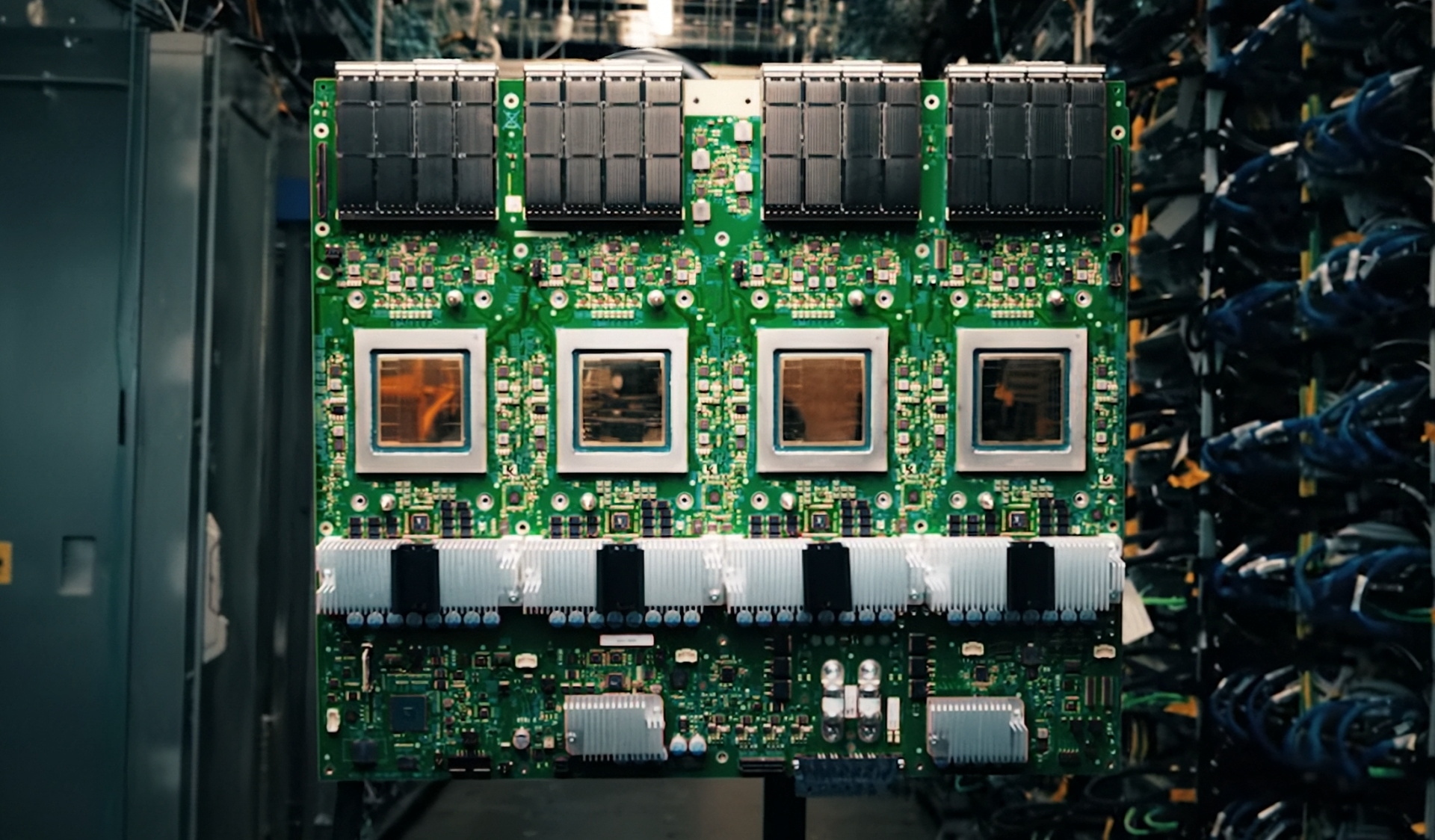

For teams pushing the boundaries of generative AI, the line between a high-performance workstation and a data-center node has blurred. The XpertStation WS300 from MSI, leveraging NVIDIA’s DGX Station architecture, positions itself as a deskside powerhouse capable of handling trillion-parameter models without relying on cloud infrastructure.

The system is built around the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip, which pairs a Grace CPU and a Blackwell Ultra GPU linked by NVLink-C2C. Because the CPU and GPU share one coherent memory space, data can move between them without the explicit copies that discrete CPU-GPU systems make over PCIe, which matters for large-scale model training and fine-tuning. With up to 748 GB of coherent memory, combining HBM3e GPU memory with LPDDR5X CPU memory, the platform sidesteps the memory-transfer bottlenecks that often plague multi-chip systems.
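To put the trillion-parameter claim next to the 748 GB figure, the back-of-the-envelope sketch below estimates the weights-only footprint of a model at different precisions. It assumes 1 GB = 10^9 bytes and that the full 748 GB is available for weights; real workloads also need room for activations, the KV cache and, when training, optimizer state. Under those assumptions a dense 1-trillion-parameter model fits only with 4-bit weights (500 GB), while 8-bit weights already need 1,000 GB.

```python
# Weights-only memory footprint of a model, compared with the WS300's quoted
# 748 GB of coherent memory. Assumption: every byte is usable for weights;
# activations, KV cache, and optimizer state are ignored.

COHERENT_MEMORY_GB = 748  # coherent memory figure quoted for the XpertStation WS300


def weights_gb(num_params: float, bits_per_param: int) -> float:
    """Size of the raw weights in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def fits_in_memory(num_params: float, bits_per_param: int,
                   capacity_gb: float = COHERENT_MEMORY_GB) -> bool:
    """True if the weights alone fit within the given capacity."""
    return weights_gb(num_params, bits_per_param) <= capacity_gb


if __name__ == "__main__":
    one_trillion = 1e12
    for bits in (16, 8, 4):
        size = weights_gb(one_trillion, bits)
        verdict = "fits" if fits_in_memory(one_trillion, bits) else "does not fit"
        print(f"{bits:>2}-bit weights: {size:,.0f} GB -> {verdict}")
```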

Performance at Desk Height

The XpertStation WS300's appeal goes beyond raw compute. Dual 400GbE connectivity, provided by NVIDIA's ConnectX-8 SuperNIC, delivers up to 800 Gb/s of aggregate bandwidth for multi-node scaling and distributed AI workloads. That is a significant leap from traditional workstations, where networking often becomes the limiting factor in collaborative or large-scale AI projects.
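The 800 Gb/s aggregate figure is simply two 400 Gb/s ports added together. The sketch below turns that into an idealized transfer time for moving a large checkpoint between nodes; it assumes both ports run at full line rate in parallel with zero protocol overhead, so real transfers will be slower. The 500 GB checkpoint size is a hypothetical example, not a figure from the source.

```python
# Idealized time to move data over the WS300's dual 400GbE links.
# Assumption: both ports run at full line rate in parallel with no protocol
# or storage overhead, so these numbers are lower bounds on real transfer time.
from typing import Optional

PORT_GBPS = 400  # per-port line rate, gigabits per second
NUM_PORTS = 2


def aggregate_gbps(port_gbps: float = PORT_GBPS, num_ports: int = NUM_PORTS) -> float:
    """Aggregate bandwidth in Gb/s across all ports."""
    return port_gbps * num_ports


def transfer_seconds(size_gb: float, link_gbps: Optional[float] = None) -> float:
    """Seconds to move size_gb decimal gigabytes at link_gbps gigabits per second."""
    if link_gbps is None:
        link_gbps = aggregate_gbps()
    return size_gb * 8 / link_gbps  # convert bytes to bits, then divide by rate


if __name__ == "__main__":
    print(aggregate_gbps())       # -> 800
    print(transfer_seconds(500))  # hypothetical 500 GB checkpoint -> 5.0
```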

Storage gets similar attention. PCIe Gen 5 and Gen 6 NVMe slots help keep data pipelines fed during intensive training runs. Paired with NVIDIA's full AI software stack, the system offers an end-to-end path for development, deployment, and even real-time inference, all from a single deskside unit.
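The jump from Gen 5 to Gen 6 doubles the per-lane signaling rate (32 GT/s to 64 GT/s). The sketch below converts that into raw per-direction link bandwidth and an idealized read time for a large file. It assumes x4 links, which are typical for NVMe drives but not confirmed for the WS300's slots, and it ignores encoding, protocol, and drive-side limits, so the results are upper bounds rather than expected throughput. The 500 GB file size is hypothetical.

```python
# Raw per-direction bandwidth of PCIe NVMe links and the idealized time to
# read a large file. Assumptions: x4 links (typical for NVMe, not confirmed
# for the WS300) and no encoding/protocol/drive overhead, so these are upper bounds.

GT_PER_LANE = {5: 32, 6: 64}  # PCIe generation -> gigatransfers/s per lane


def raw_link_gbytes(gen: int, lanes: int = 4) -> float:
    """Raw one-direction bandwidth in GB/s (one transfer carries one bit per lane)."""
    return GT_PER_LANE[gen] * lanes / 8


def read_seconds(size_gb: float, gen: int, lanes: int = 4) -> float:
    """Idealized seconds to read size_gb gigabytes over a single link."""
    return size_gb / raw_link_gbytes(gen, lanes)


if __name__ == "__main__":
    for gen in (5, 6):
        print(f"Gen {gen} x4: {raw_link_gbytes(gen):.0f} GB/s, "
              f"500 GB in {read_seconds(500, gen):.1f} s")
```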

Key Specifications

- Chip: NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip

- Memory: Up to 748 GB coherent memory (HBM3e + LPDDR5X)

- Networking: Dual 400GbE (NVIDIA ConnectX-8 SuperNIC, 800 Gb/s aggregate bandwidth)

- Storage: PCIe Gen 5 and Gen 6 NVMe support

- Connectivity: PCIe 5.0/6.0, dual 400GbE ports

This combination of specifications lets the XpertStation WS300 handle workloads that traditionally required server racks. For example, running trillion-parameter models locally, backed by up to 20 petaFLOPS of AI compute, becomes feasible without cloud dependencies. However, the system's compact form factor means it won't match the scalability of a full data-center deployment.
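One way to read the 20 petaFLOPS figure is as a compute-bound ceiling on token throughput. The sketch below uses the common rule of thumb that a dense transformer needs roughly 2 FLOPs per parameter per token for a forward pass and roughly 6 for a training step. It assumes the quoted peak is fully achievable at whatever precision it was measured, which never happens in practice; memory bandwidth usually limits decoding well before compute does. For a mixture-of-experts model, `num_params` should be the active parameter count rather than the total.

```python
# Compute-bound ceiling on token throughput from a peak FLOPS figure.
# Rule of thumb (an approximation, not a measurement): ~2 FLOPs per parameter
# per token for inference, ~6 for training (forward + backward).
# Assumes 100% of the quoted peak is usable; real throughput is far lower.

PEAK_FLOPS = 20e15  # "up to 20 petaFLOPS" of AI compute, as quoted


def max_tokens_per_second(num_params: float, flops_per_param_token: float,
                          peak_flops: float = PEAK_FLOPS) -> float:
    """Upper bound on tokens/s if the workload were purely compute-bound."""
    return peak_flops / (flops_per_param_token * num_params)


if __name__ == "__main__":
    n = 1e12  # one trillion dense (or active) parameters
    print(f"inference ceiling: {max_tokens_per_second(n, 2):,.0f} tokens/s")
    print(f"training ceiling:  {max_tokens_per_second(n, 6):,.0f} tokens/s")
```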

Balancing Local and Distributed Workflows

The platform is designed to support the entire AI lifecycle, from model training to real-time inference. It can serve as a shared node for collaborative fine-tuning or on-demand deployment, giving teams tighter control over proprietary data while keeping their environment consistent with larger-scale infrastructure.

NVIDIA's NemoClaw stack, which includes the OpenShell runtime with policy-controlled sandboxing, extends the system further by allowing autonomous AI agents to run continuously at the deskside. That could redefine how local AI development is approached, but whether this level of autonomy translates into practical workflow improvements remains to be seen.

Where It Fits (and Where It Doesn’t)

The XpertStation WS300 is aimed at organizations looking to move AI projects from experimentation to production without the overhead of a full data-center setup. It’s not a replacement for cloud infrastructure but rather a bridge, offering the performance needed for local development while maintaining compatibility with distributed environments.

For gamers or enthusiasts, this system may seem like overkill: its strengths lie in AI workloads, not real-time rendering. For teams working on large language models or generative AI, however, it represents a significant step toward decentralized, high-performance computing. The tradeoff is cost and power consumption, since such capabilities come at a premium.

Availability and pricing have not been confirmed, but given the system’s architecture, it’s likely to be positioned as a high-end enterprise solution rather than a consumer product.