Intel’s CEO has revealed a high-stakes partnership with NVIDIA that could redefine how AI workloads run on x86 servers. While the tech giant has been aggressively pushing its in-house Arc graphics for consumer chips, behind the scenes, it’s quietly integrating NVIDIA’s NVLink technology into a custom Xeon design. The move suggests Intel is doubling down on enterprise AI performance, but critical questions about architecture, timing, and impact remain unanswered.

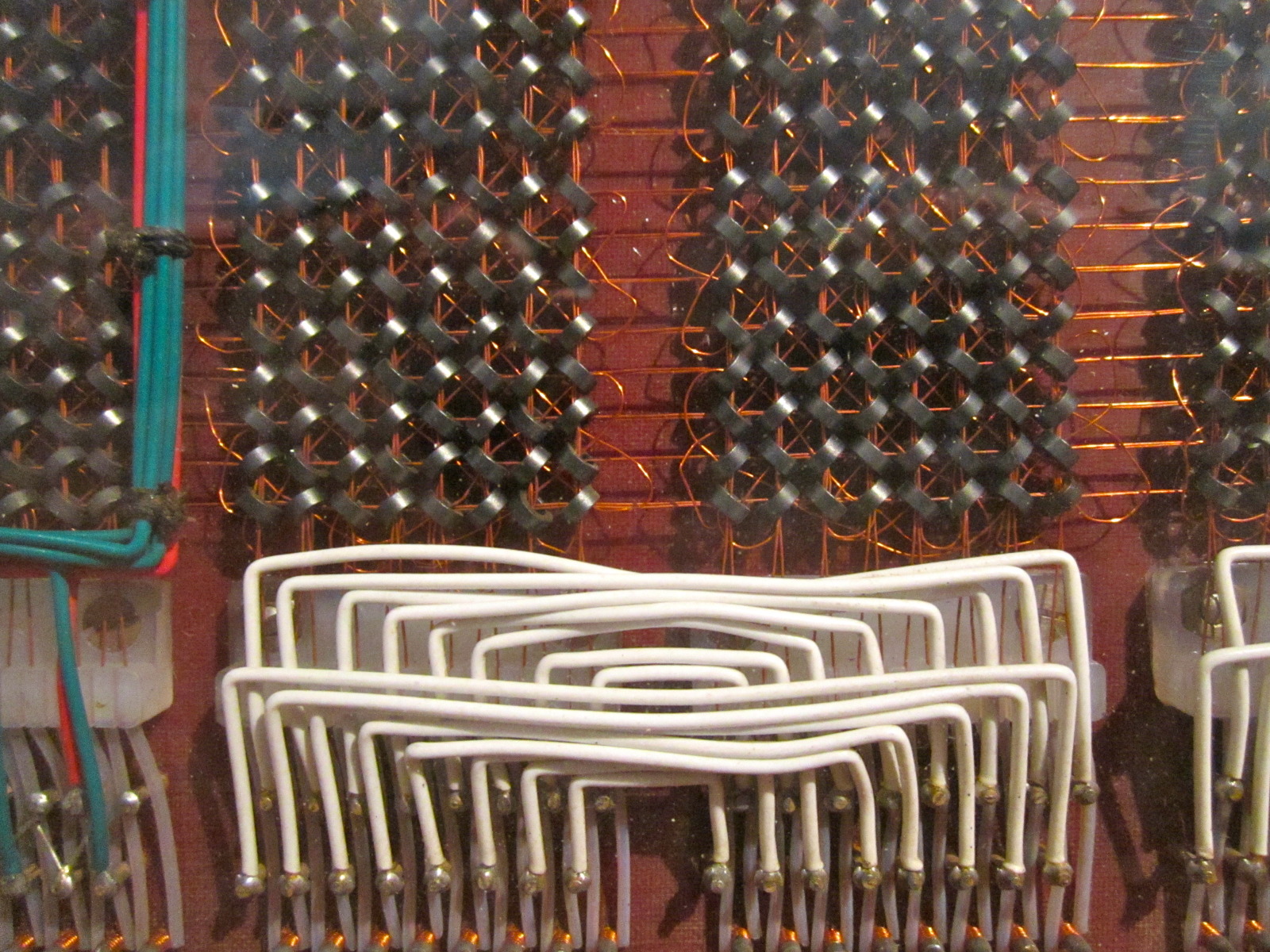

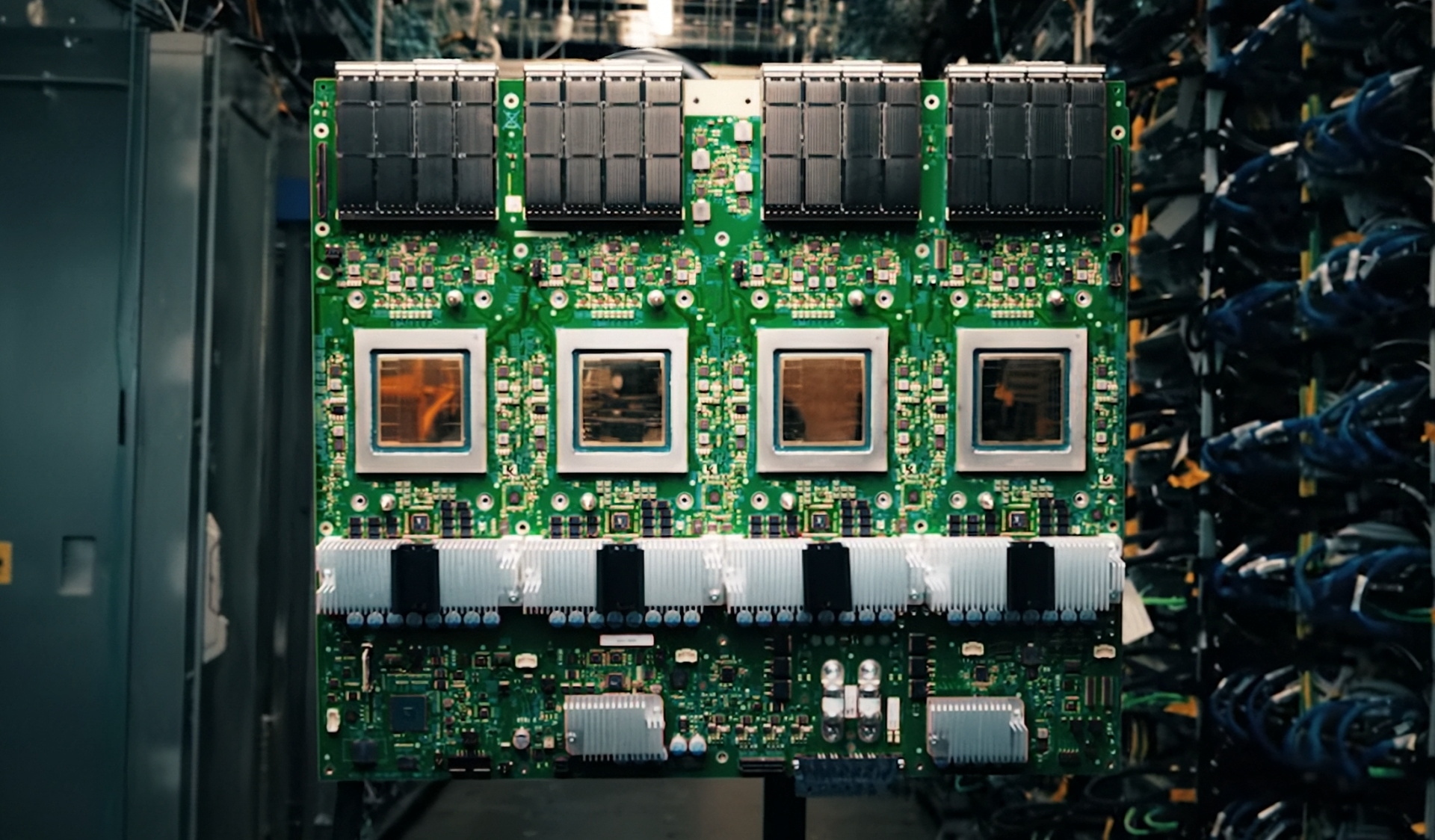

The announcement, buried in a recent earnings call, hints at a processor optimized for AI host nodes, in which NVIDIA’s NVLink, typically used to connect GPUs for ultra-low-latency communication, would be embedded directly into the CPU. This is a departure from traditional server designs, where the host CPU usually reaches its GPUs over PCIe and NVLink is reserved for GPU-to-GPU links. If successful, it could streamline AI training clusters by easing the bottleneck between host CPUs and accelerator GPUs.

For enterprises, the integration of NVLink into a Xeon could mean faster data transfer between CPUs and GPUs, reducing overhead in distributed AI training. NVIDIA’s NVLink has long been a cornerstone of its high-performance computing (HPC) and AI ecosystems, offering 300 GB/s of per-GPU bandwidth in its Volta-era generation and up to 1.8 TB/s in its latest. By embedding this technology into a Xeon, Intel may be attempting to compete more directly with NVIDIA’s own CPU-GPU ecosystems, such as its DGX platforms.
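To put the bandwidth argument in rough numbers, here is a minimal back-of-the-envelope sketch. It assumes an idealized link running at peak rate with no protocol overhead, uses the 300 GB/s NVLink figure mentioned above, and compares it against an approximate 128 GB/s aggregate (both directions combined) for a PCIe 5.0 x16 slot. The 140 GB payload is a hypothetical example, roughly a 70-billion-parameter model stored at 2 bytes per parameter. None of these numbers come from Intel or describe the custom Xeon itself.

```python
def transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Idealized time to move a payload at a sustained peak bandwidth."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return payload_gb / bandwidth_gb_per_s


# Assumed peak aggregate bandwidths in GB/s; real-world throughput is lower.
LINKS_GB_PER_S = {
    "PCIe 5.0 x16 (approx. aggregate)": 128.0,
    "NVLink (300 GB/s figure)": 300.0,
}


def compare(payload_gb: float) -> dict[str, float]:
    """Return the idealized transfer time in seconds for each assumed link."""
    return {
        name: transfer_seconds(payload_gb, bandwidth)
        for name, bandwidth in LINKS_GB_PER_S.items()
    }


if __name__ == "__main__":
    # Hypothetical payload: 70e9 parameters * 2 bytes = 140 GB.
    for name, seconds in compare(140.0).items():
        print(f"{name}: {seconds:.2f} s")
```

Under these assumptions the NVLink-class link moves the same payload in well under half the time, which is the kind of gap the article’s "reduced overhead" claim rests on. Actual gains would depend on link generation, one-way versus bidirectional traffic, and how often the host CPU sits on the critical path.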

However, the partnership raises several unanswered questions. The most pressing is whether this custom Xeon will leverage Intel’s upcoming Coral Rapids architecture, slated to reintroduce Hyper-Threading for datacenter workloads. If so, the processor could benefit from improved multi-threaded performance, but it’s unclear how NVLink integration will interact with Intel’s existing memory and I/O subsystems. Additionally, the timeline for this processor remains fluid—rumors previously suggested a 2027 launch, but Intel has yet to confirm.

Potential Trade-Offs

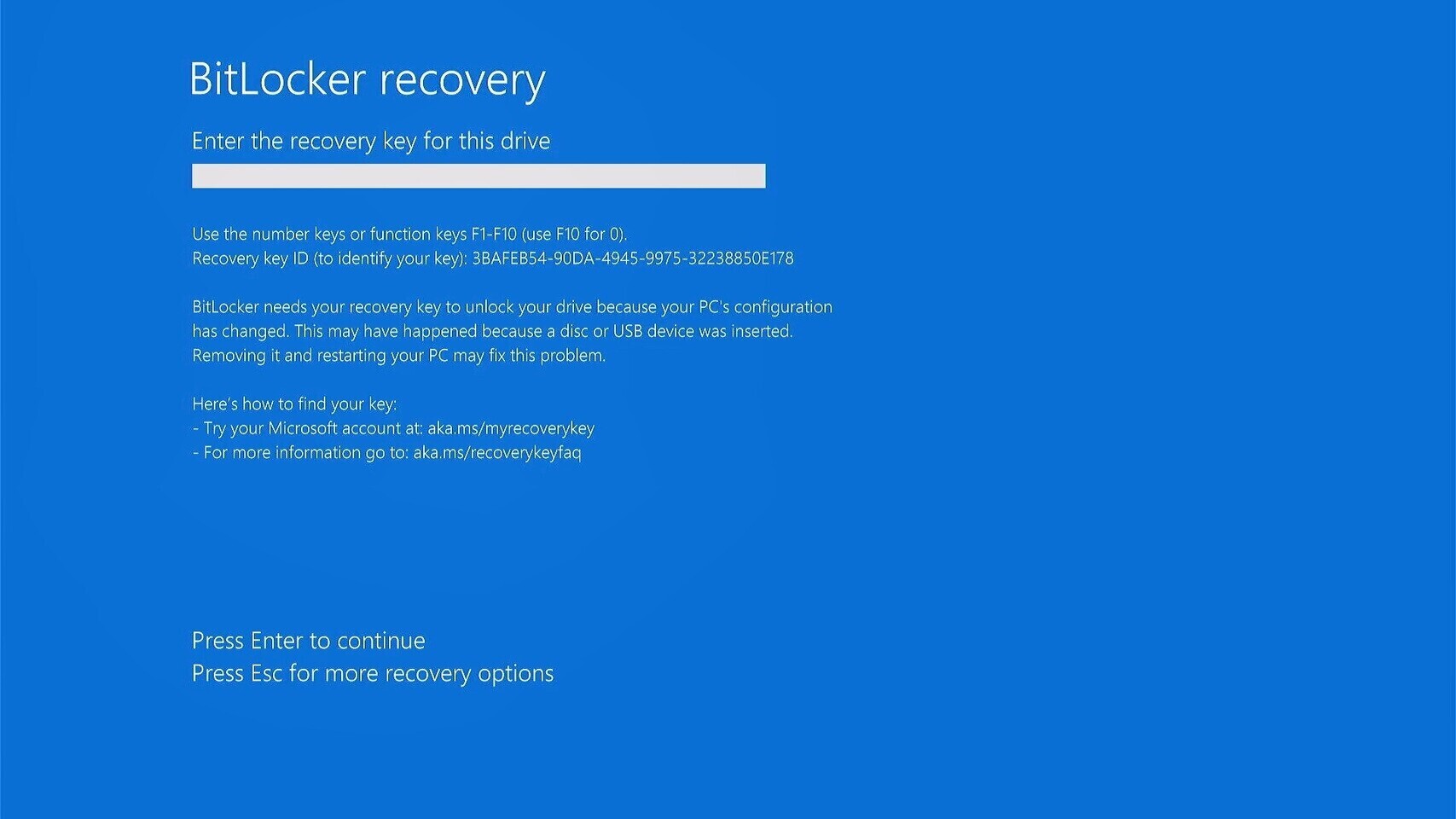

The move also introduces technical complexities. NVLink’s primary strength lies in its ability to connect multiple GPUs with minimal latency, but integrating it into a CPU requires significant redesigns. Intel would need to ensure backward compatibility with existing server motherboards and cooling solutions, which could delay adoption. Furthermore, while NVLink accelerates GPU-to-GPU communication, its role within a CPU remains untested at scale. Early adopters may face compatibility challenges, particularly if the custom Xeon requires proprietary hardware or software adjustments.

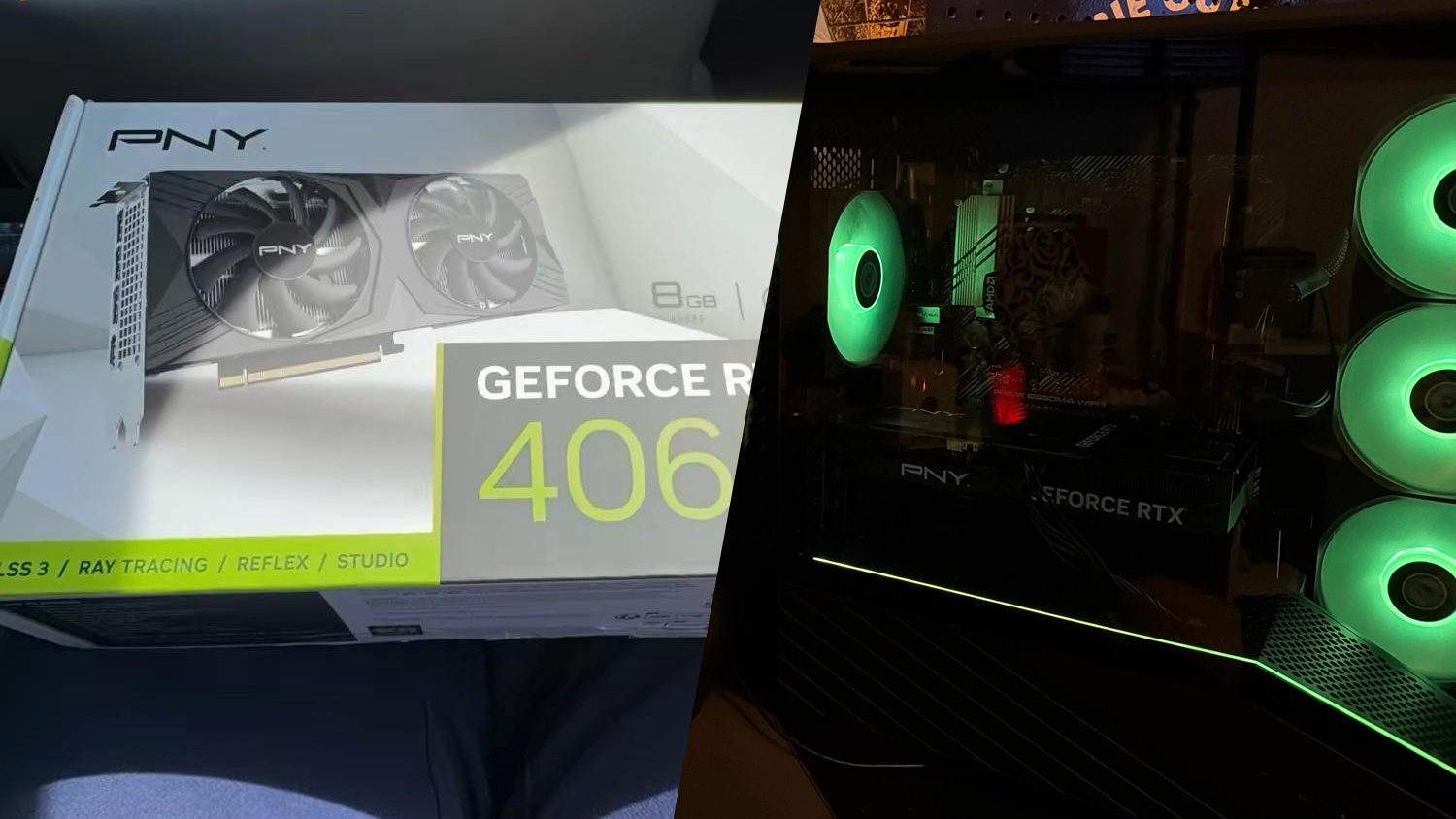

Another consideration is Intel’s broader strategy. The company has been investing heavily in its Core Ultra 300 series for consumer and mobile markets, which includes integrated graphics based on its Arc architecture. However, the Xeon-NVLink collaboration suggests a bifurcated approach—one where Intel is simultaneously pushing its own graphics solutions while leveraging NVIDIA’s strengths in AI acceleration. This dual strategy could confuse customers, particularly in the enterprise space, where standardization is critical.

Intel has not provided specifics on the custom Xeon’s architecture, performance benchmarks, or release date. The focus for now appears to be on accelerating the Coral Rapids series, which is expected to bring Hyper-Threading back to Xeon processors after Intel dropped it from the preceding server generation. If the NVLink-integrated Xeon follows the Coral Rapids roadmap, it could arrive as early as mid-2027, though industry insiders caution that timelines in chip development are rarely precise.

For NVIDIA, the partnership reinforces its dominance in AI infrastructure. By embedding NVLink into a Xeon, Intel may be acknowledging the limitations of its own in-house solutions for high-performance computing. The collaboration could also pressure AMD, which has been expanding its EPYC CPU and Instinct GPU lineup to compete in AI workloads. If Intel’s custom Xeon delivers on its promise, it may force AMD to rethink its own integration strategies.

Ultimately, the success of this venture hinges on execution. Intel’s ability to balance NVLink integration with its existing server ecosystem will determine whether this becomes a game-changer for AI or just another footnote in the chip wars. One thing is certain: the enterprise AI market is evolving rapidly, and Intel’s move signals a willingness to adapt—even if the details are still coming into focus.