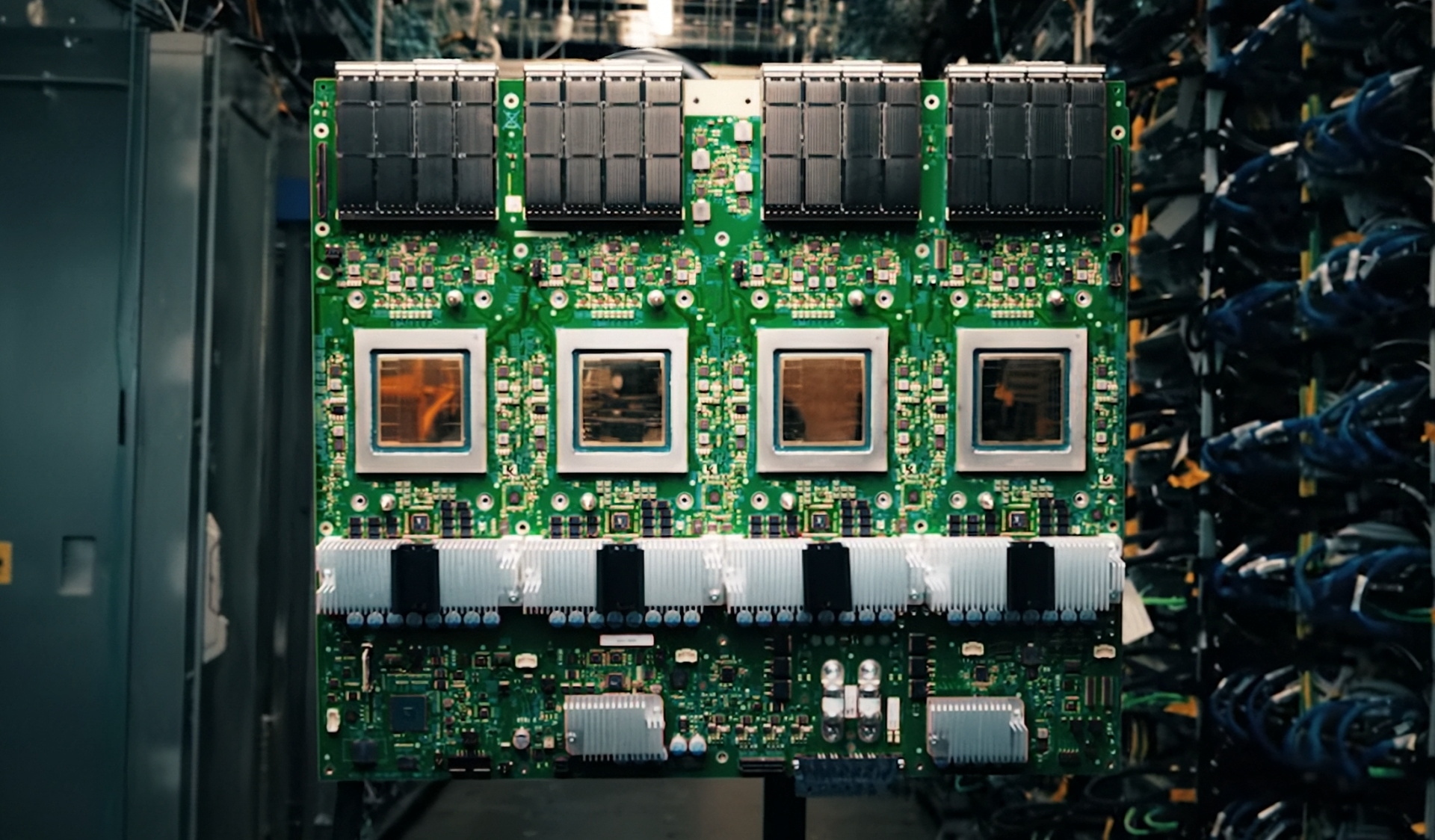

Intel has unveiled a strategic shift in its data center and client CPU roadmaps, placing heavy emphasis on AI-driven performance while forging a close partnership with NVIDIA. The collaboration will produce a custom Xeon CPU equipped with NVLink technology, a move intended to strengthen x86-based host nodes in NVIDIA’s GPU-centric AI systems.

The announcement comes amid Intel’s broader push to reclaim market dominance in both server and client segments. The company’s latest financial report, which showed $13 billion in revenue for Q4 2025, highlighted ongoing supply constraints but also signaled a renewed focus on high-performance, multi-threaded solutions. CEO Lip-Bu Tan emphasized a simplified product strategy that prioritizes 16-channel Diamond Rapids Xeon processors over the previously planned 8-channel variants, a decision framed as a commitment to maximizing performance for hyperscale AI deployments.

Diamond Rapids, set to debut in 2026, will mark Intel’s first move to a 16-channel memory configuration, aligning with the demands of next-generation AI workloads. Following Diamond Rapids, Coral Rapids will reintroduce simultaneous multithreading (SMT), a feature Diamond Rapids omits, to restore core/thread leadership in the data center space. The integration of NVLink into Intel’s custom Xeon design is particularly notable, as it allows NVIDIA’s Blackwell and Rubin GPUs to interface more efficiently with Intel’s x86 architecture, potentially opening new avenues for AI training and inference clusters.

On the client side, Intel’s recent launch of Core Ultra Series 3 (Panther Lake) has set the stage for broader AI adoption in consumer devices, with over 200 notebook designs already announced by OEM partners. However, the company’s sights are firmly set on Nova Lake, its next-generation platform slated for Q4 2026. Nova Lake is positioned as a cost-optimized yet high-performance solution for desktops and notebooks, aiming to deliver a performance improvement of more than 20% over its predecessor. This focus on efficiency and scalability suggests Intel is preparing a two-pronged offensive: regaining server market share through AI-optimized Xeon chips while expanding its client footprint with Nova Lake’s balanced performance.

The realignment of Intel’s roadmap reflects a deliberate pivot toward high-end, AI-centric workloads, where multi-threaded, high-channel CPUs are becoming the standard. By eliminating the 8-channel Diamond Rapids variant and accelerating Coral Rapids’ introduction, Intel is consolidating its resources on platforms that can compete with AMD’s EPYC and NVIDIA’s GPU-driven ecosystems. The NVLink-integrated Xeon represents a bold step toward bridging the gap between x86 and GPU architectures, potentially reshaping how AI clusters are designed and deployed.

For hyperscalers and enterprise customers, the implications are significant. The custom Xeon with NVLink could simplify AI infrastructure by coupling x86 hosts and NVIDIA accelerators more tightly, while Diamond Rapids and Coral Rapids aim to deliver the raw performance required for large-scale machine learning. Meanwhile, Nova Lake’s arrival in late 2026 suggests Intel is betting on a sustained push into the AI PC market, where cost efficiency and performance scalability will be critical differentiators.

With Panther Lake already featured in more than 200 announced notebook designs, Intel’s client strategy appears to be gaining traction. However, the real test will come with Nova Lake, which must deliver on its promises of performance and cost-effectiveness to secure long-term dominance in both desktops and laptops. The partnership with NVIDIA, while still in development, signals a broader industry trend toward tighter integration between CPUs and GPUs, a move that could redefine the landscape for AI and high-performance computing.