At the heart of modern data centers lies a growing tension: the need for both general-purpose compute power and specialized acceleration to handle the exploding demand for AI workloads. Intel and Google are now doubling down on an approach that combines Xeon processors with custom infrastructure processing units (IPUs) to address this challenge, promising more efficient and scalable AI systems.

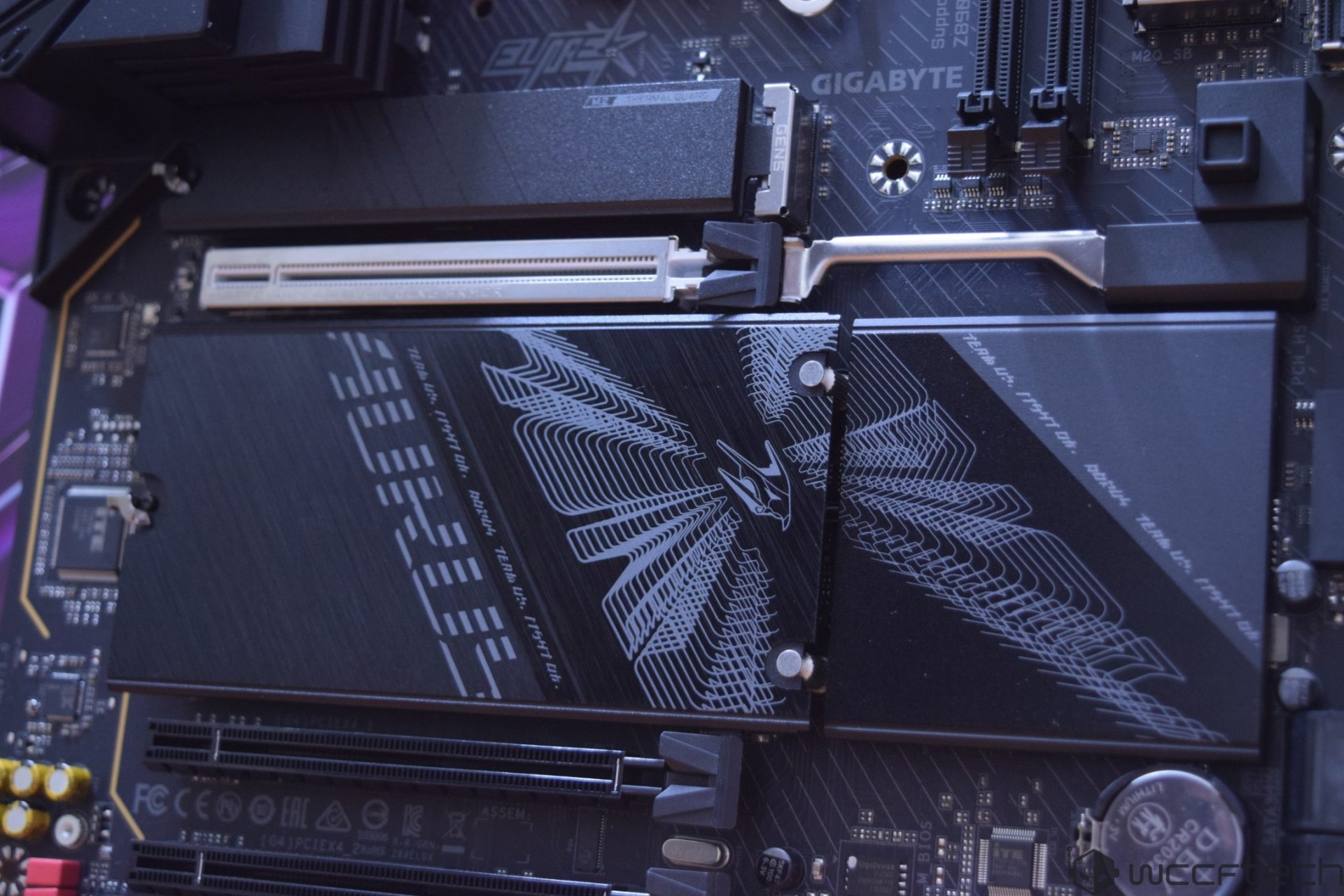

The partnership, announced recently, will see the two tech giants collaborate across multiple generations of Intel Xeon processors, including the latest Intel Xeon 6 series. These chips already power Google’s C4 and N4 instances, which support everything from large-scale AI training to latency-sensitive inference tasks. But the collaboration doesn’t stop at CPUs. It also covers the development of custom ASIC-based IPUs designed to offload networking, storage, and security functions from host processors.

Why it matters: While accelerators like GPUs have dominated headlines in AI, modern systems rely on a mix of components. Xeon CPUs handle orchestration and data processing, while IPUs take on infrastructure tasks such as networking, storage, and security. That frees host CPU cycles for application work, which improves utilization and makes performance more predictable. This balanced approach could be key to scaling AI efficiently without adding complexity.

What’s in it for users?

The immediate impact for cloud customers is likely to be improved total cost of ownership, thanks to better energy efficiency and system utilization. For power users running workload-optimized instances on Google Cloud, this could translate into faster training cycles or more responsive inference tasks. However, the full benefits will depend on how quickly Intel and Google can integrate these new IPUs into production environments.

Looking ahead

The collaboration suggests a shift toward more heterogeneous AI systems, where CPUs, accelerators, and IPUs work in tandem. While this won’t replace specialized hardware like GPUs overnight, it could redefine how data centers are architected for the next wave of AI workloads, one where efficiency is as critical as raw performance.