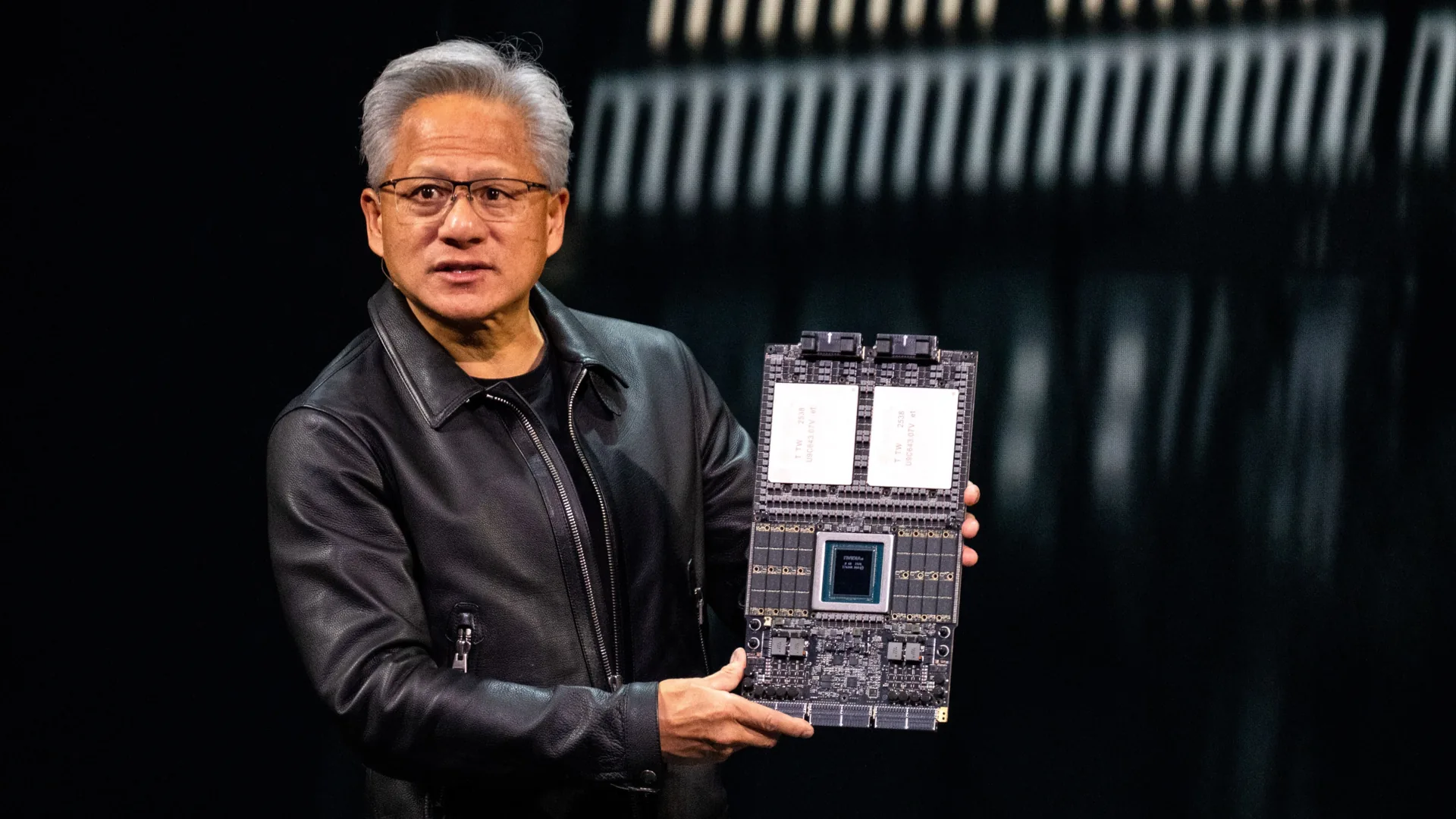

The memory industry is undergoing a seismic shift as HBM4 production ramps up, and the balance of power is tilting sharply toward Samsung and SK hynix, leaving Micron on the sidelines for the foreseeable future. Nvidia's upcoming Vera Rubin AI accelerator and AMD's Instinct MI400 series will rely almost exclusively on HBM4, a memory technology that stacks DRAM dies on a logic base die within a single package to deliver much higher bandwidth. But while this advancement promises major efficiency gains, it also exposes a critical vulnerability in Micron's strategy.

Micron’s internal HBM4 base die—designed in-house to reduce costs and tighten supply chain control—has encountered significant validation challenges. Issues with pin speeds, thermal management, and foundry precision have stalled progress, forcing a redesign of the base die and adjustments to power delivery networks (PDN) and physical layer (PHY) elements. With Nvidia’s Rubin platform already in full production, Micron’s timeline for qualification has slipped into Q2 2026, handing Samsung and SK hynix a commanding lead.

The New Market Order

Samsung has emerged as the first to meet Nvidia's stringent pin-speed requirements for HBM4, securing an estimated 20–30% share of HBM4 supply for Rubin and MI400. SK hynix, meanwhile, is expected to dominate with over 50% of that supply, further consolidating Korea's grip on the HBM4 ecosystem. This shift isn't just about market share; it's a strategic realignment. Samsung, which lagged behind with HBM3, is now making an aggressive comeback, while SK hynix leverages its partnership with TSMC to optimize its logic base dies, avoiding the pitfalls Micron faced by going it alone.

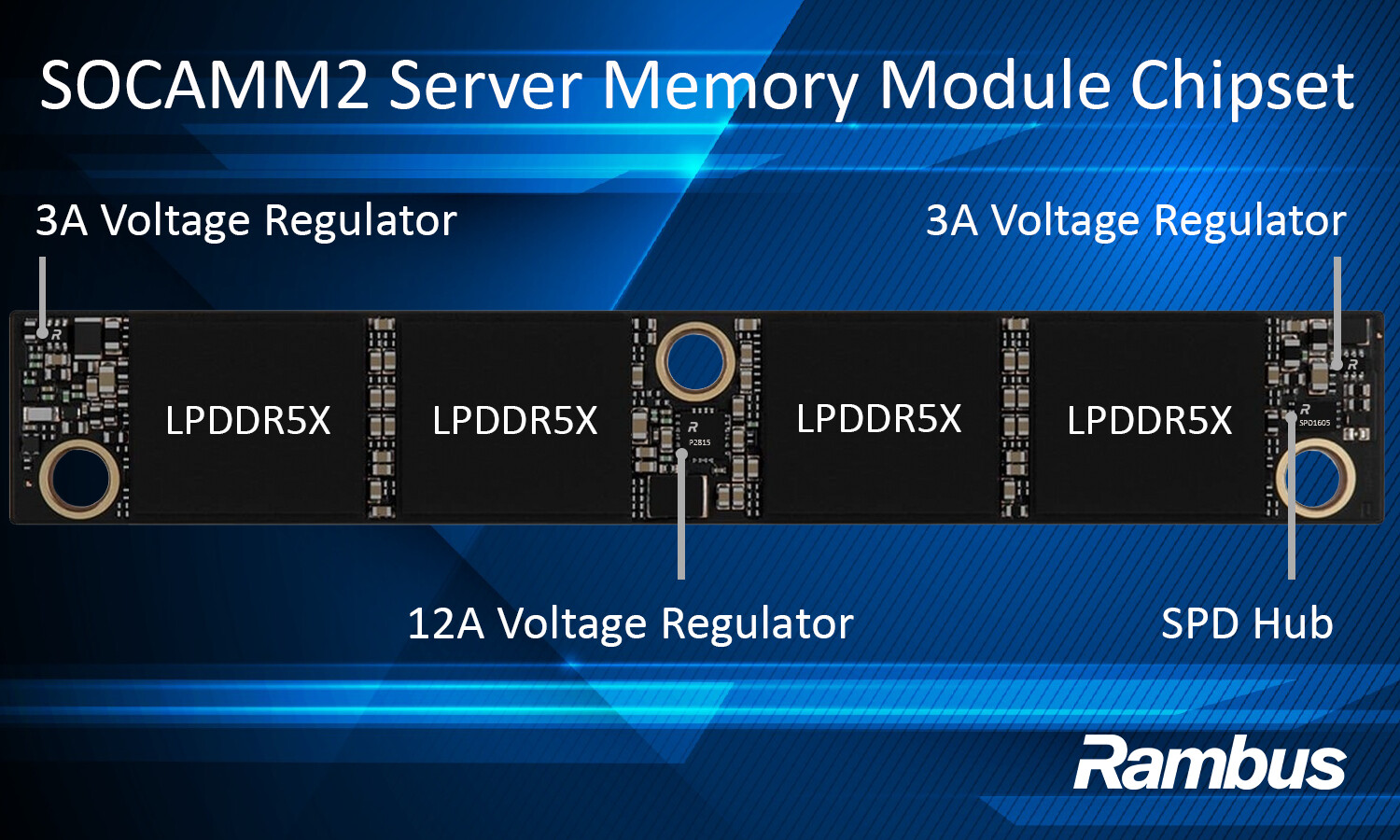

Micron isn't disappearing entirely. Its general-purpose DRAM remains in high demand, particularly for SOCAMM (Small Outline Compression Attached Memory Module) applications. But in the high-performance HBM segment, the company's absence could have ripple effects. Without Micron's participation, Samsung and SK hynix will control the bulk of Nvidia's HBM4 supply chain, reinforcing their dominance in AI and data center memory markets.

Why This Matters for AI Hardware

The implications for AI accelerators like Rubin are profound. HBM4's tighter integration and higher per-pin data rates (up to 11.7 Gbps in SK hynix's latest modules) enable significant performance uplifts. For Nvidia, this means platforms capable of 50 PFLOPS of compute, a fivefold improvement over its Blackwell architecture. But relying on just two suppliers introduces new risks: delays, supply constraints, or geopolitical tensions could disrupt production timelines.
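The 11.7 Gbps figure is a per-pin data rate, not a per-stack bandwidth. The sketch below converts one into the other, assuming a 2048-bit interface per stack (the width defined in the JEDEC HBM4 standard) and ignoring protocol overhead. The 8 Gbps baseline is the JEDEC HBM4 rate, and `stack_bandwidth_gbs` is a hypothetical helper for illustration, not part of any vendor tool.

```python
# Theoretical peak bandwidth of one HBM stack:
#   bandwidth (GB/s) = per-pin rate (Gbps) x interface width (bits) / 8 bits per byte
# Assumption: 2048 data pins per HBM4 stack (JEDEC HBM4 width). Real sustained
# bandwidth will be lower than this peak because of refresh and protocol overhead.

HBM4_INTERFACE_BITS = 2048  # assumed data-interface width per stack


def stack_bandwidth_gbs(pin_rate_gbps: float, interface_bits: int = HBM4_INTERFACE_BITS) -> float:
    """Return the theoretical peak bandwidth of one stack in GB/s (hypothetical helper)."""
    return pin_rate_gbps * interface_bits / 8


if __name__ == "__main__":
    # Compare the JEDEC baseline rate with SK hynix's reported 11.7 Gbps per pin.
    for label, rate in [("JEDEC HBM4 baseline", 8.0), ("SK hynix HBM4 (reported)", 11.7)]:
        bw = stack_bandwidth_gbs(rate)
        print(f"{label}: {rate} Gbps/pin -> {bw:.1f} GB/s (~{bw / 1000:.2f} TB/s) per stack")
```

Under these assumptions, 11.7 Gbps per pin works out to roughly 3 TB/s of theoretical peak bandwidth per stack, versus about 2 TB/s at the JEDEC baseline; an accelerator's total memory bandwidth scales with the number of stacks it carries.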

For buyers, the shift may translate into higher prices for high-end AI systems, as Samsung and SK hynix consolidate pricing power. Meanwhile, Micron's struggles could accelerate its push into alternative memory technologies, such as LPDDR6 for mobile and edge devices, where it maintains a stronger foothold.

Key Specs: HBM4 in the AI Era

- Per-pin data rate: Up to 11.7 Gbps (SK hynix HBM4 modules)

- Memory Type: HBM4 (stacks DRAM dies on a logic base die in a single package)

- Supply Chain (estimated Rubin/MI400 HBM4 share): Samsung (20–30%), SK hynix (>50%), Micron (0% for now)

- Validation Timeline: Micron’s HBM4 qualification delayed to Q2 2026; Samsung and SK hynix already supplying Rubin/MI400

- Thermal/Pin Challenges: Micron's in-house base die faces thermal and pin-speed limitations compared with base dies built through TSMC (SK hynix) or Samsung's own foundry

- Alternative Focus: Micron shifting resources to LPDDR6, SOCAMM, and general-purpose DRAM

This isn't just a technical upgrade; it's a turning point. The memory industry's future hinges on whether Micron can overcome its validation hurdles or whether Samsung and SK hynix will solidify their duopoly. For AI developers and data center operators, the stakes couldn't be higher: the choice of memory supplier will shape performance, cost, and scalability for years to come.