Google has quietly expanded its Gemini AI to interpret and analyze visual content directly from a user’s screen, marking a shift toward more interactive AI assistance. The feature, available for free, allows users to share live views of applications, browser tabs, or even webcam footage—then ask the AI to describe, explain, or troubleshoot what’s displayed.

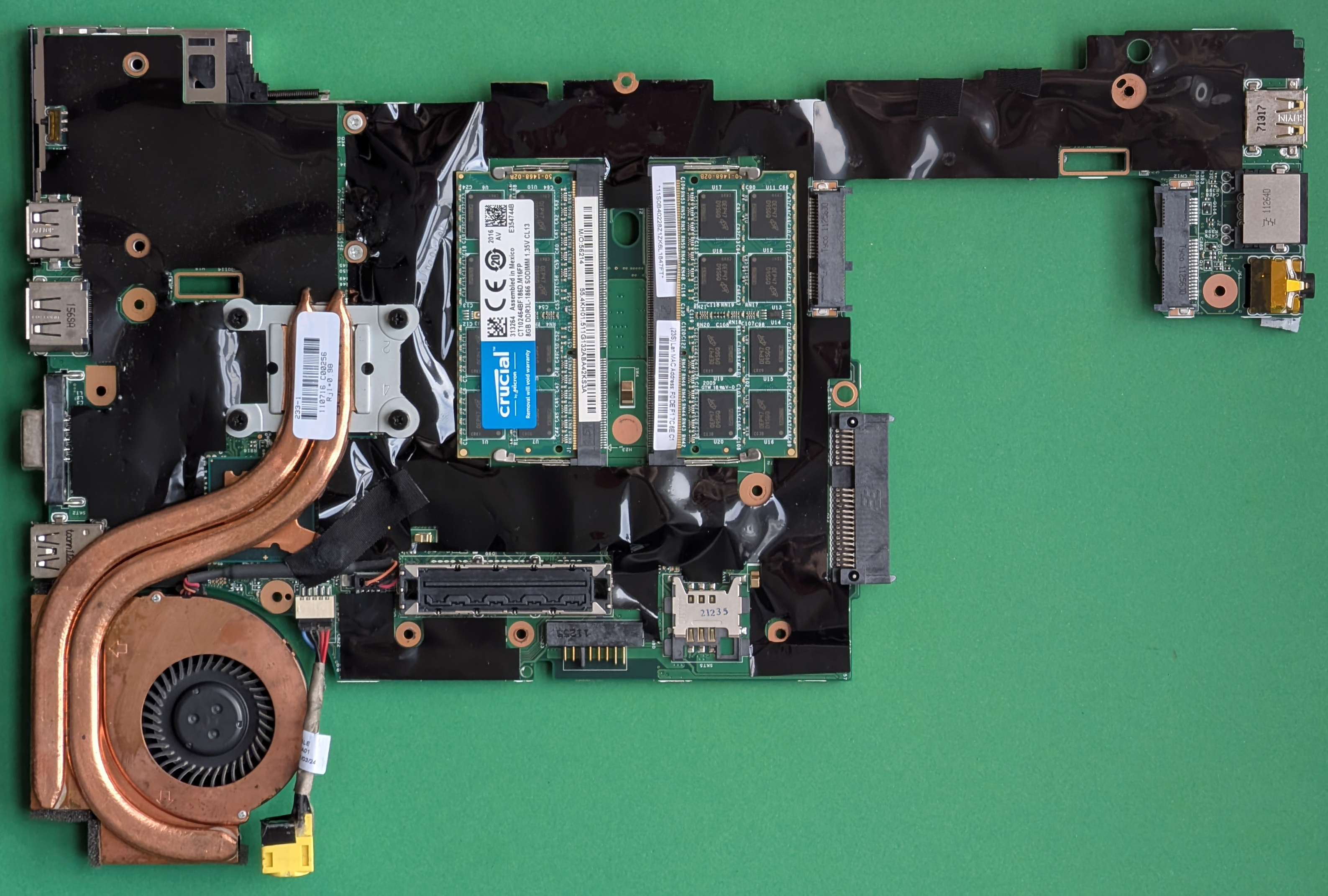

For example, a user could open a complex spreadsheet, share their screen via Gemini’s ‘Stream’ tool, and ask, ‘What’s the average of column C?’ The AI then parses the data in real time. The same applies to images: upload a screenshot or point your webcam at a physical document, and Gemini will transcribe or summarize its contents.
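Because the AI is reading numbers off a shared screen, an answer to a question like the one about column C is worth checking independently. The following is a minimal sketch of such a check, assuming the spreadsheet has been exported as CSV with a header row containing a column labeled C. The sample data and the `column_average` helper are hypothetical illustrations and are not part of Gemini or AI Studio.

```python
import csv
import io
from statistics import mean

# Hypothetical sample data standing in for an exported spreadsheet.
SAMPLE_CSV = """A,B,C
widget,3,10.0
gadget,5,20.0
gizmo,2,
doohickey,7,30.0
"""


def column_average(csv_text: str, column: str) -> float:
    """Return the mean of the non-blank numeric cells in the named column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    values = []
    for row in reader:
        cell = (row.get(column) or "").strip()
        if cell:  # skip empty cells rather than counting them as zero
            values.append(float(cell))
    if not values:
        raise ValueError(f"no numeric values in column {column!r}")
    return mean(values)


if __name__ == "__main__":
    print(column_average(SAMPLE_CSV, "C"))
```

Blank cells are skipped rather than treated as zero, which is how most spreadsheet AVERAGE functions behave. If the figure Gemini reports differs from a check like this, the empty-cell handling is one of the first things to compare.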

This isn’t just for static files. Users can stream a video clip, and the AI will provide a live breakdown of key moments, objects, or even text overlays. The tool also integrates with creative software; a designer could share a Photoshop layer panel and ask Gemini to suggest color adjustments or layout improvements.

The how-to: Sharing your screen with Gemini

To use the feature, visit aistudio.google.com and sign in with a Google account. On the left sidebar, select ‘Stream’, then choose either ‘Share Screen’ or ‘Webcam’. For screen sharing, a prompt appears to select a specific window or tab. This step is critical for privacy, as the AI only sees what you explicitly share. Click ‘Share’, then interact with the content by voice or text. End the session by closing the ‘Stream is Live’ window.

Voice input is seamless, but text works as a fallback—though typing while describing a dynamic screen (like a video) is cumbersome. Webcam access requires a one-time browser permission, after which Gemini can analyze live or static images, such as a handwritten note or a product label.

Why this matters

Before this update, AI tools like Gemini required users to manually describe or upload content. Now, the barrier drops for tasks like:

- Debugging code by sharing an IDE window and asking for syntax fixes.

- Translating on-screen text from foreign-language documents without copying.

- Getting instant summaries of research papers or reports displayed in a PDF viewer.

- Receiving step-by-step guidance for complex software (e.g., ‘How do I animate this layer in After Effects?’).

The feature’s free tier positions it as a competitor to paid tools like Microsoft Copilot, which offers similar screen analysis for Office users. However, Gemini’s broader compatibility—supporting third-party apps and web content—could appeal to power users and creatives. Privacy remains a consideration: users must manually end sessions, and Google’s terms prohibit sharing sensitive data (e.g., passwords or private conversations).

For now, the tool is in active development, with Google likely refining its accuracy for edge cases like low-resolution images or fast-moving video. But the core vision is clear: AI that doesn’t just listen to you, but sees what you see—and helps you act on it instantly.