How quickly can an AI coding tool go from launch to 1 million downloads? For OpenAI’s Codex app, the answer is seven days. That pace mirrors the explosive debut of ChatGPT, with one critical difference: this tool is built for developers who demand more than autocomplete. The milestone is a testament to the app’s utility, but it also signals a turning point in how OpenAI manages access, especially as competitors introduce alternatives that prioritize flexibility over ecosystem lock-in.

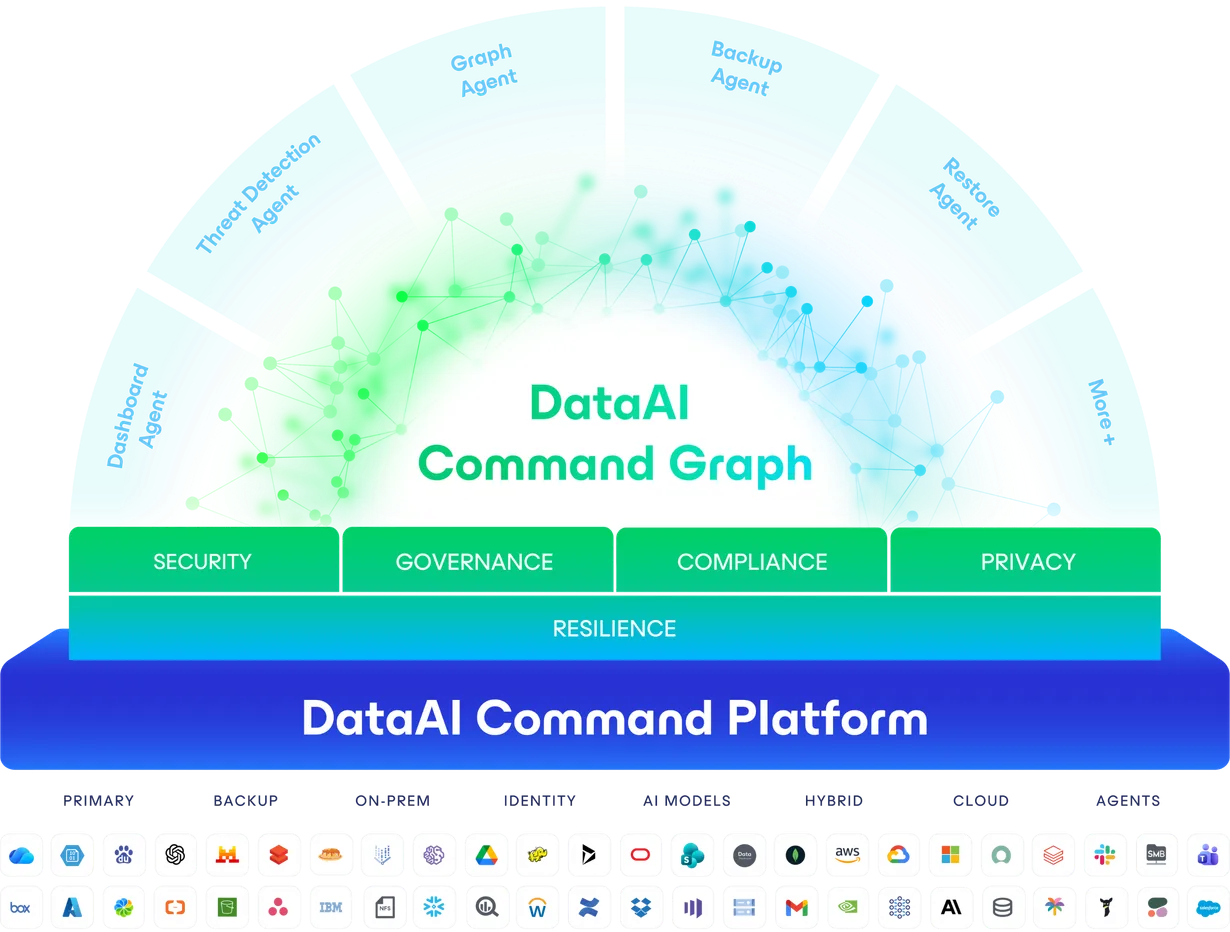

What’s driving the rush? The app’s ability to orchestrate multiple AI agents simultaneously, a feature that turns it into a command center for complex workflows. Unlike traditional assistants that handle one task at a time, Codex allows developers to spin up parallel agents—each tackling different code paths, debugging sessions, or maintenance tasks—without stepping on each other’s toes. This isn’t just about speeding up coding; it’s about redefining how teams collaborate with AI.

The app is powered by GPT-5.3-Codex, a model that has already proven its mettle by debugging its own training pipeline. It scores 77.3% on Terminal-Bench 2.0, a rigorous test of agentic performance in terminal environments. For developers who rely on command-line tools, that level of autonomy means more multi-step terminal work can be handed off to an agent.

Why is free access likely to change? OpenAI’s decision to extend Codex to Free and Go-tier users was a temporary boost, but the company has made it clear this isn’t a permanent arrangement. Paid subscribers already enjoy doubled rate limits, but the free tier’s access is expected to shrink once the promotion ends. This shift isn’t just about monetization—it’s a reflection of the massive computational costs behind running high-capability models at scale.

What does this mean for developers? The upcoming restrictions could force a reevaluation of how AI tools fit into workflows. Those accustomed to unlimited access may need to adjust, whether by upgrading subscriptions or exploring open-source alternatives. Enterprises, in particular, will need to weigh the risks of vendor lock-in against the efficiency gains these tools offer.

How parallel AI agents could redefine development workflows

What sets Codex apart from other coding assistants is its ability to manage multiple AI agents in real time. This isn’t a single bot suggesting fixes; it’s an orchestration system in which each agent can:

- Run independent code explorations without conflicts, allowing teams to test multiple approaches simultaneously.

- Handle background tasks like dependency updates or test execution, freeing developers from manual oversight.

- Maintain a unified project context across agents, ensuring no critical details are lost when switching between tasks.
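
The pattern in the list above can be illustrated with a minimal sketch. It is not how Codex is implemented; it only assumes that "parallel agents without conflicts" means each agent writes to its own workspace while all of them read one shared, read-only context. Every name here (`ProjectContext`, `run_agent`, `orchestrate`) is hypothetical, and the model call is replaced by a placeholder.

```python
import asyncio
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class ProjectContext:
    """Read-only facts shared by every agent (hypothetical structure)."""
    repo_name: str
    goal: str


@dataclass
class AgentResult:
    task: str
    workspace: str
    summary: str


async def run_agent(task: str, context: ProjectContext, root: Path) -> AgentResult:
    # Each agent gets its own directory, so writes cannot collide.
    # Assumes task names are unique; duplicates would raise FileExistsError.
    workspace = root / task.replace(" ", "_")
    workspace.mkdir()
    await asyncio.sleep(0)  # placeholder for real model or tool calls
    (workspace / "notes.txt").write_text(f"{context.repo_name}: {task}\n")
    return AgentResult(task, str(workspace), f"finished '{task}' for {context.goal}")


async def orchestrate(tasks: list[str], context: ProjectContext) -> list[AgentResult]:
    # The temporary root is deleted on exit; only the result records survive.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        # gather() runs the agents concurrently and preserves input order.
        return await asyncio.gather(*(run_agent(t, context, root) for t in tasks))
```

Calling `asyncio.run(orchestrate(["fix bug", "update deps"], ProjectContext("demo", "release")))` returns one result per task, in the order the tasks were given. The frozen dataclass is a deliberate choice: agents can read the shared context but cannot mutate it under each other.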

For teams working on large-scale projects, this could translate to fewer bottlenecks and faster iteration. But the real question is whether OpenAI can scale this capability without creating new dependencies for users.

Who stands to gain—or lose—as free access tightens?

Who benefits most from Codex’s current free access? Likely, it’s individual developers and small teams testing AI-assisted workflows for the first time. For them, the app’s ability to handle complex tasks autonomously is a major draw. But as restrictions come into play, those without paid plans may face slower processing speeds or limited agent usage—a trade-off that could push them toward competitors.

Who might pivot to alternatives? Enterprises and power users accustomed to unlimited access could turn to tools like Kilo CLI, which supports over 500 AI models and avoids vendor lock-in. Anthropic’s Claude Code, meanwhile, has already demonstrated strong traction with $1 billion in annualized revenue within six months—a sign that OpenAI isn’t the only player with deep pockets and ambition.

What’s the biggest risk for developers? The shift toward paid tiers could create fragmentation in workflows. Teams that rely on multiple AI tools may find themselves juggling different pricing structures, APIs, and access levels—unless they adopt a model-agnostic approach from the start.
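
One way to picture a model-agnostic approach is a thin interface that application code depends on, with each vendor hidden behind an adapter. The sketch below is illustrative only: `CodeModel`, `EchoModel`, and `complete_with_fallback` are hypothetical names, and the stand-in backend does not call any real provider's SDK.

```python
from typing import Protocol, Sequence


class CodeModel(Protocol):
    """Anything that can turn a prompt into text (hypothetical interface)."""
    name: str

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real adapter would call a vendor SDK here."""

    def __init__(self, name: str, fail: bool = False) -> None:
        self.name = name
        self._fail = fail

    def complete(self, prompt: str) -> str:
        if self._fail:
            raise RuntimeError(f"{self.name} unavailable")
        return f"[{self.name}] {prompt}"


def complete_with_fallback(models: Sequence[CodeModel], prompt: str) -> str:
    """Try each backend in order and return the first successful answer."""
    errors = []
    for model in models:
        try:
            return model.complete(prompt)
        except Exception as exc:  # sketch only; narrow this in real code
            errors.append(f"{model.name}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))
```

With this shape, swapping providers or adding a fallback when one tier becomes rate-limited changes the list passed to `complete_with_fallback`, not the code that calls it.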

How is the competition reshaping the AI coding landscape?

What’s one major trend in the AI coding space? The rise of open-source and multi-model tools that reject ecosystem lock-in. Kilo CLI, for example, allows developers to deploy agents across terminals, Slack, or IDEs without tying them to a single provider. This flexibility is a direct response to concerns about opaque subscription costs and vendor dependencies.

What’s another key differentiator? Competitors like Claude Code are focusing on enterprise-grade reliability, with features like long-context processing and fine-tuned security controls. OpenAI’s strength has always been its consumer-friendly accessibility, but as the market matures, enterprises may prioritize tools built for governance and scalability.

What’s the long-term implication? The success of Codex could accelerate a broader shift: from AI as a copilot to AI as a full-fledged operator in development. But whether that shift happens smoothly depends on how well OpenAI balances innovation with accessibility—and how quickly competitors can adapt.

What should developers do now to prepare?

How can teams future-proof their workflows? By adopting a multi-tool strategy that includes both AI assistants and open-source alternatives. This means evaluating tools not just on capabilities, but on long-term flexibility and cost.

What’s a critical step for enterprises? Implementing governed repositories and human oversight so that AI tools integrate securely. The more autonomous these tools become, the more important it is to keep control over critical workflows.

What’s the bottom line? The 1 million download mark is a strong validation of Codex’s potential, but the real test will be how OpenAI and others adapt as free access fades. For developers, the message is clear: the AI coding revolution is here—but the tools that thrive will be the ones that evolve beyond experimentation into reliable, scalable components of modern development.