Edge AI is no longer a future promise. It is here, but with a catch: performance often comes at the cost of higher power consumption and more complex cooling.

The latest attempt to solve this equation arrives in the form of ASUS’s new liquid-cooled AI pod, which integrates NVIDIA’s NVL72 GPU. The device is designed to deliver data-center-grade processing at the edge, but whether it can do so without the usual trade-offs remains an open question.

What makes this pod different isn’t just its cooling mechanism or hardware specs; it’s the way ASUS is positioning it as a bridge between two worlds: the performance demands of AI workloads and the practical constraints of edge deployments. The challenge? Meeting both without sacrificing scalability.

From Data Center to Edge, with Caveats

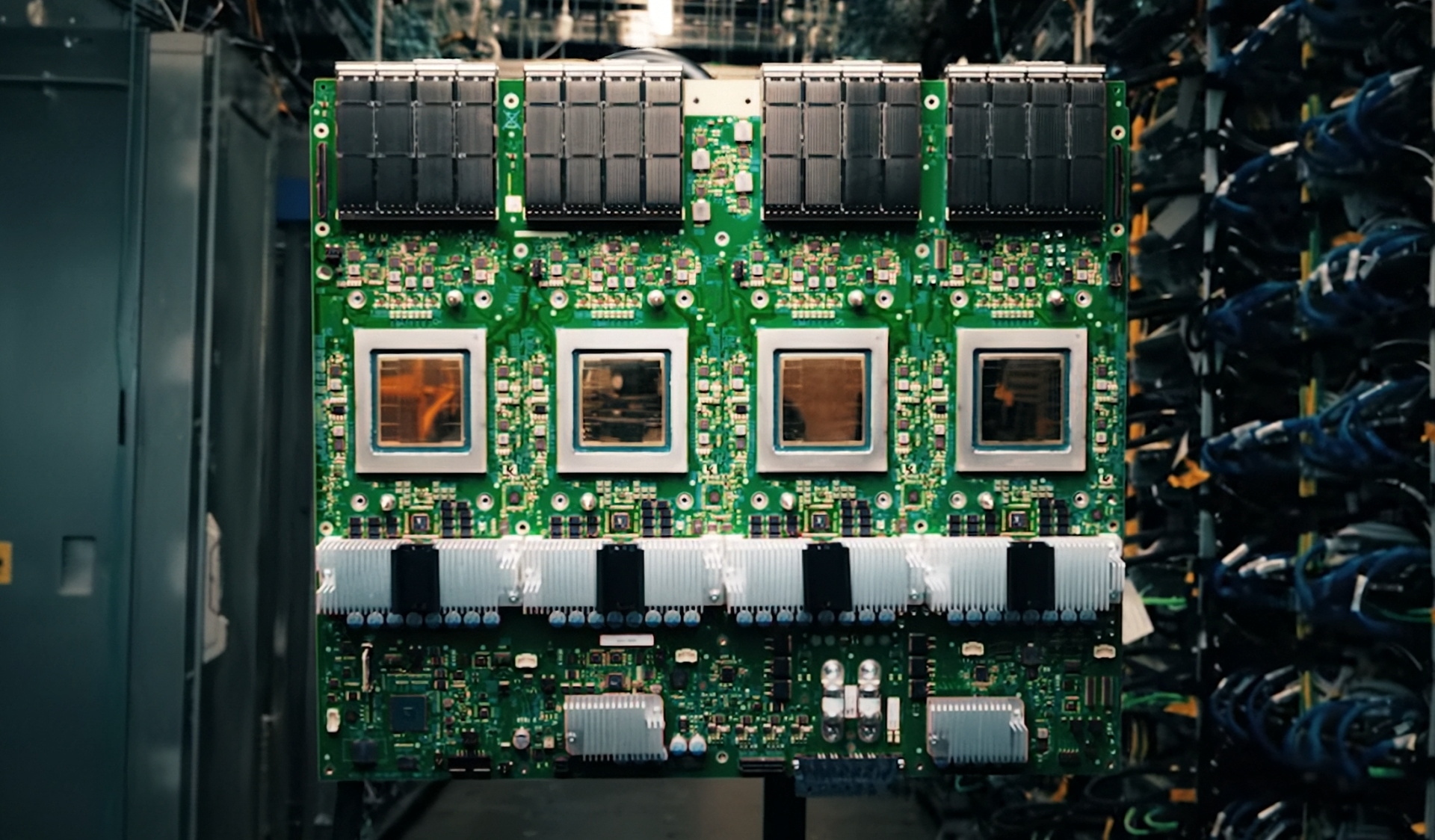

The NVL72 GPU at the heart of this pod is built on NVIDIA’s Blackwell architecture, a platform that has already shown promise in data-center scenarios. With 96GB of HBM3 memory and a TDP of up to 450 watts, it is not a lightweight part. What sets it apart for edge use cases is its liquid-cooling integration, a necessity when packing this much power into a compact form factor.

Liquid cooling isn’t new, but its adoption in edge AI hardware has been limited. Most solutions still rely on air cooling or passive heat sinks, which struggle to dissipate the thermal output of GPUs like the NVL72. ASUS’s approach here is to combine liquid cooling with a ruggedized design, aiming for deployments in environments where thermal headroom and space are at a premium.
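To give a feel for what liquid cooling has to handle, here is a minimal sketch of the steady-state heat balance P = ṁ · c_p · ΔT, solved for coolant flow. It uses the 450-watt per-GPU TDP quoted above; the plain-water coolant properties and the 10 K coolant temperature rise are illustrative assumptions, not ASUS specifications, and the function name is hypothetical.

```python
# Rough coolant-flow estimate from the heat balance P = m_dot * c_p * dT.
# Assumptions (not from ASUS): plain water as coolant, 10 K temperature rise.

WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximate; glycol mixes differ


def coolant_flow_l_per_min(heat_load_w: float, delta_t_k: float) -> float:
    """Return the coolant flow (litres/minute) needed to carry away heat_load_w
    with a coolant temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    gpu_tdp_w = 450.0        # per-GPU TDP quoted in the article
    assumed_delta_t_k = 10.0  # assumption: coolant warms by 10 K across the cold plate
    flow = coolant_flow_l_per_min(gpu_tdp_w, assumed_delta_t_k)
    print(f"Approximate flow per GPU: {flow:.2f} L/min")
```

The estimate covers GPU heat only and ignores CPUs, memory, storage, and pump losses, so a real loop would need more margin than this figure suggests.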

Specs That Matter—But Not Enough

The pod itself houses two NVL72 GPUs, 1TB of DDR5 memory (ECC-ready), and up to 8TB of NVMe storage. It’s not just about raw performance; the focus is on creating a self-contained unit that can handle inference workloads with minimal external infrastructure.

- Two NVIDIA NVL72 GPUs, Blackwell architecture
- 96GB HBM3 memory per GPU (ECC supported)
- 1TB DDR5 system memory (ECC-ready)
- Up to 8TB NVMe storage
- Liquid-cooled design for sustained performance
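Taking the listed figures at face value, a quick back-of-the-envelope check shows what they imply for inference sizing. The sketch below totals GPU memory and GPU power for the pod, then tests whether a model’s weights would fit. The 70-billion-parameter model, the 20% headroom reserved for activations and KV cache, and the helper names are hypothetical choices for illustration only.

```python
# Back-of-the-envelope sizing from the spec list above.
# Model size and headroom fraction are illustrative assumptions.

GPUS_PER_POD = 2
HBM_PER_GPU_GB = 96   # from the spec list
GPU_TDP_W = 450       # "up to" figure quoted earlier in the article

TOTAL_HBM_GB = GPUS_PER_POD * HBM_PER_GPU_GB   # 192 GB across both GPUs
TOTAL_GPU_POWER_W = GPUS_PER_POD * GPU_TDP_W   # 900 W peak, GPUs only


def weights_footprint_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed for model weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def fits_in_hbm(num_params: float, bytes_per_param: float,
                headroom_fraction: float = 0.2) -> bool:
    """True if the weights fit after reserving headroom_fraction of total HBM
    for activations, KV cache, and runtime overhead (an assumed margin)."""
    usable_gb = TOTAL_HBM_GB * (1.0 - headroom_fraction)
    return weights_footprint_gb(num_params, bytes_per_param) <= usable_gb


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model
    for label, nbytes in (("FP16", 2), ("FP32", 4)):
        size = weights_footprint_gb(params, nbytes)
        print(f"{label}: {size:.0f} GB of weights, fits: {fits_in_hbm(params, nbytes)}")
    print(f"Peak GPU power to budget for: {TOTAL_GPU_POWER_W} W")
```

Weights are only part of the picture: batch size, context length, and how the model is split across the two GPUs all affect whether a workload fits in practice.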

The question isn’t whether this hardware can deliver the numbers; on paper, it can. The uncertainty lies in how well it adapts to edge constraints: power efficiency, form factor flexibility, and long-term reliability in non-ideal conditions.

A Reality Check on Edge AI

Edge AI has been hyped for years, but real-world adoption has lagged behind the marketing. The promise of localized processing, reduced latency, and lower bandwidth demands is undeniable, but the practical barriers—cooling, power draw, and integration complexity—have kept many solutions from taking off.

ASUS’s pod addresses some of these barriers with its liquid-cooled design, but it also raises new questions. How well does this approach scale compared with traditional air-cooled solutions? Can it maintain performance in dusty or high-altitude environments without additional maintenance overhead? And perhaps most critically, how does it fit into existing edge infrastructure without becoming a siloed, unsustainable investment?

What Comes Next

The market for edge AI is still evolving, but the trend is clear: more compute power, stricter efficiency demands, and fewer compromises. ASUS’s pod is one step in that direction, but whether it becomes a standard or remains a niche solution depends on how well it navigates those competing priorities.

For IT teams evaluating edge AI options, this pod offers a glimpse of what’s possible—but also serves as a reminder that the path to widespread adoption isn’t just about hardware. It’s about rethinking entire workflows, from power management to thermal design, and ensuring that innovation doesn’t outpace practicality.