workloads are no longer constrained by raw compute alone—they demand a software ecosystem capable of optimizing memory access, reducing latency, and scaling across distributed systems. AMD’s ROCm 7.2 release, announced today, delivers exactly that: a suite of optimizations targeting AI training and inference, from low-precision arithmetic support to topology-aware communication and fine-grained power management.

The AI Acceleration Overhaul

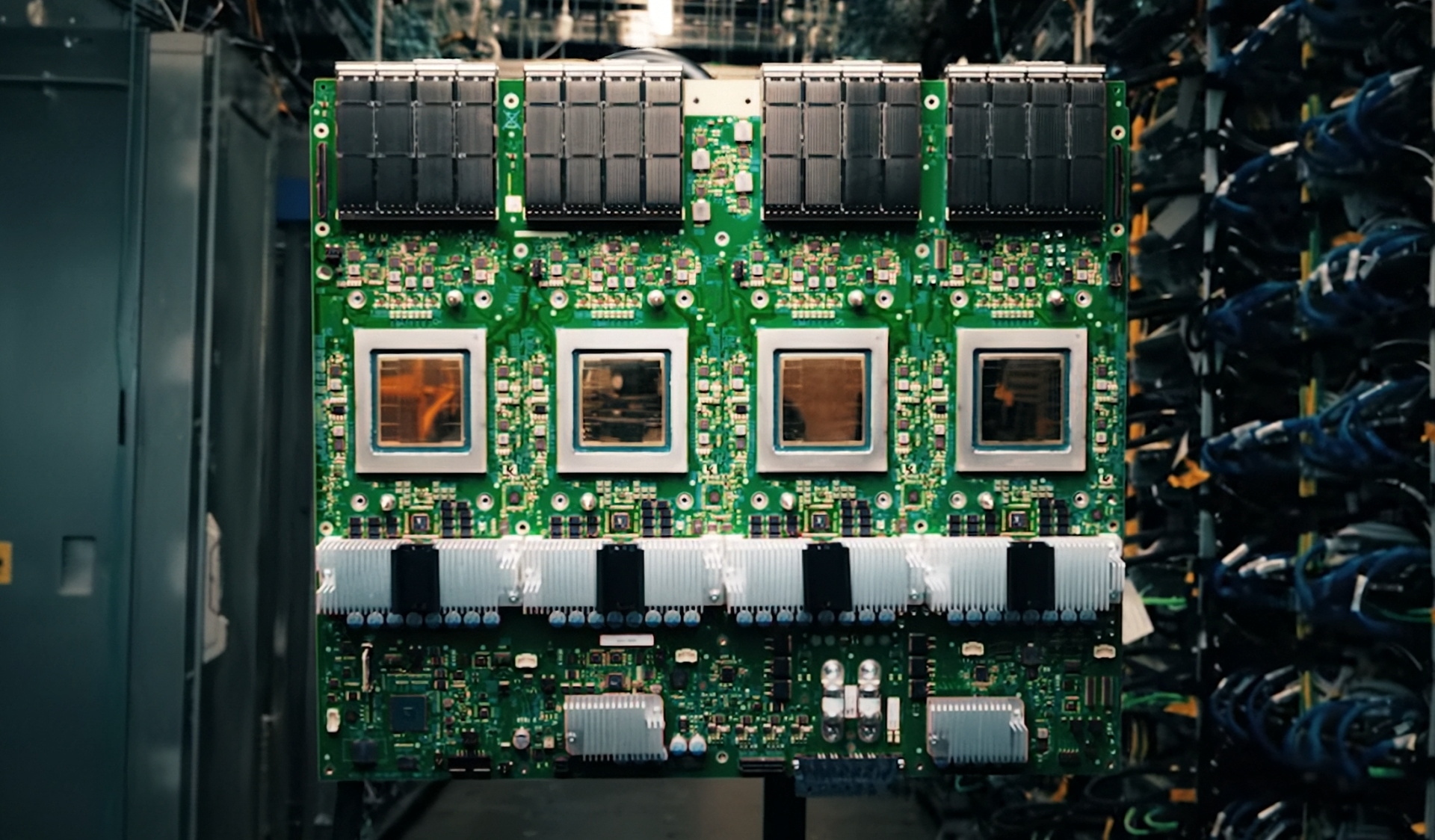

At the core of ROCm 7.2 lies a push toward efficiency in both training and inference. FP8 and FP4 data types, now supported across the rocMLIR compiler and the MIGraphX graph stack, let developers use ultra-low-precision arithmetic for emerging AI models. AMD says this is more than theoretical: it has already tuned real-world workloads, including Llama 3.1 405B on MI355X and MI350X GPUs, where kernel-level enhancements and memory bandwidth optimizations are reported to improve performance.
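To make the appeal of lower precision concrete, the back-of-the-envelope arithmetic below estimates how much memory the weights of a 405-billion-parameter model occupy at FP16, FP8, and FP4. It is a rough sketch, not an AMD figure: it assumes every parameter is stored at the stated width and ignores scale factors, activations, and KV cache. The function and constant names are illustrative.

```python
# Rough weight-storage estimate at different precisions.
# Assumption: every parameter uses exactly `bits` bits; scale factors,
# activations, and KV cache are ignored.
BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}

PARAMS_405B = 405_000_000_000  # parameter count of a 405B model


def weight_footprint_gb(num_params: int, bits: int) -> float:
    """Return weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits / 8 / 1e9


footprints = {
    name: weight_footprint_gb(PARAMS_405B, bits)
    for name, bits in BITS_PER_PARAM.items()
}

for name, gb in footprints.items():
    print(f"{name}: {gb:.1f} GB")
```

Each halving of the bit width halves the weight footprint, and with it the bytes that must be moved from memory for every weight read, which is why low-precision formats matter for bandwidth-bound inference.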

For developers working with GEMM-heavy workloads, hipBLASLt has received targeted improvements: expanded tuning capabilities, a new restore-from-log feature for reproducible performance, and swizzle optimizations for the A and B matrices that reduce memory access bottlenecks. AMD reports performance uplifts on MI200 and MI300 GPUs relative to ROCm 7.1, and updated reporting tools give deeper visibility into kernel selection.

Scalability Redefined: Topology-Aware Communication

Distributed AI training often stumbles over inefficient GPU-to-GPU communication. ROCm 7.2 addresses this with two key advancements: GPUDirect Async (GDA) support in rocSHMEM and topology-aware optimizations in RCCL. GDA removes the CPU from data transfers between GPUs or across nodes via RDMA NICs, which cuts latency. Meanwhile, RCCL now distributes collective operations across multi-rail networks, minimizing contention and improving aggregate bandwidth, both of which are critical for hyperscale deployments.
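The idea of spreading traffic across several network rails can be illustrated with a small sketch. This is not RCCL's algorithm, whose internals the announcement does not describe; it is a simplified model that splits one message into near-equal contiguous chunks, one per rail, so that no single NIC carries the whole transfer. The function name `stripe_across_rails` is hypothetical.

```python
from typing import List, Tuple


def stripe_across_rails(message_bytes: int, num_rails: int) -> List[Tuple[int, int]]:
    """Split a message into contiguous (offset, length) chunks, one per rail.

    Illustrative only: a real collective library also accounts for
    topology, link health, and in-flight traffic.
    """
    if num_rails <= 0:
        raise ValueError("num_rails must be positive")
    if message_bytes < 0:
        raise ValueError("message_bytes must be non-negative")

    base, extra = divmod(message_bytes, num_rails)
    chunks = []
    offset = 0
    for rail in range(num_rails):
        # The first `extra` rails carry one additional byte each.
        length = base + (1 if rail < extra else 0)
        chunks.append((offset, length))
        offset += length
    return chunks


# Example: a 1 MiB message over a 4-NIC (four-rail) node.
for rail, (offset, length) in enumerate(stripe_across_rails(1 << 20, 4)):
    print(f"rail {rail}: offset={offset} length={length}")
```

Under this model, the largest chunk any rail carries shrinks roughly in proportion to the number of rails, which is the intuition behind the aggregate-bandwidth claim.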

AMD presents these changes as more than incremental. It claims that features backported from NCCL 2.28 give RCCL more advanced collective algorithms, resulting in faster, more stable distributed training. Support for 4-NIC topologies further aligns communication patterns with modern data center architectures, reducing cross-rail bottlenecks.

Enterprise-Grade Reliability and Power Control

For cloud providers and enterprises, ROCm 7.2 introduces SR-IOV virtualization and RAS (Reliability, Availability, Serviceability) enhancements for MI350X and MI355X GPUs. Bad page avoidance improves GPU availability under memory faults, while security hardening, including volatile memory clearing and MMIO fuzzing protections, is intended to bring feature parity with competing platforms. These updates are essential for multi-tenant deployments, where isolation and reliability are non-negotiable.

Power management advances with Node Power Management (NPM), which dynamically adjusts GPU frequencies across multi-GPU nodes to stay within power limits. Using built-in telemetry and control algorithms, NPM operates in both bare-metal and KVM SR-IOV environments, making it adaptable to diverse deployments. AMD SMI integration allows users to monitor power allocation in real time.
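The general idea of keeping a multi-GPU node under a power cap can be sketched as follows. This is not NPM's control algorithm, which AMD has not detailed here; it is a deliberately simple proportional scheme, under the assumption that each GPU's budget is scaled by the same factor whenever the node exceeds its limit. How a power budget maps to a clock frequency is left out, and `rebalance_power` is a hypothetical name.

```python
from typing import List


def rebalance_power(draw_watts: List[float], node_limit_watts: float) -> List[float]:
    """Return per-GPU power budgets that sum to at most the node limit.

    Illustrative proportional scaling only; a production controller
    would use telemetry feedback and per-GPU workload priorities.
    """
    if node_limit_watts <= 0:
        raise ValueError("node_limit_watts must be positive")

    total = sum(draw_watts)
    if total <= node_limit_watts:
        # Already within the cap: leave every GPU untouched.
        return list(draw_watts)

    scale = node_limit_watts / total
    return [watts * scale for watts in draw_watts]


# Example: three GPUs drawing 2000 W in total under a 1500 W node cap.
budgets = rebalance_power([700.0, 700.0, 600.0], 1500.0)
print(budgets)
```

A uniform scale factor is the simplest fair policy; a smarter controller could instead shift budget toward the GPUs doing the most work.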

Who Benefits?

This release isn’t just for research labs. Hyperscale AI providers will appreciate the topology-aware communication and SR-IOV/RAS features, while enterprises deploying multi-GPU nodes gain from NPM’s dynamic power control. Developers working with frameworks like PyTorch or Triton will benefit from ThinLTO support, which enables global optimizations without the build-time overhead of full LTO. And for those pushing the boundaries of low-precision AI, FP8/FP4 support in rocMLIR opens doors to higher throughput and efficiency.

The bottom line? ROCm 7.2 isn’t just an incremental update—it’s a step toward making AMD’s Instinct GPUs the platform of choice for production-grade AI. With optimizations spanning from kernel-level tuning to distributed communication, AMD is positioning itself as a serious contender in the AI infrastructure race.

Key Specs and Features

- FP8/FP4 Support: Enabled in rocMLIR and MIGraphX for next-gen AI workloads.

- GEMM Optimizations: Tuned for FP8, BF16, and FP16 on MI300X, MI350, and MI355 GPUs.

- GPUDirect Async (GDA): Removes CPU from critical communication paths in rocSHMEM.

- RCCL Topology Awareness: Supports 4-NIC networks, reducing cross-rail contention.

- SR-IOV/RAS Enhancements: Bad page avoidance, MMIO fuzzing protections for MI350X/MI355X.

- Node Power Management (NPM): Dynamic frequency adjustment for multi-GPU nodes.

- ThinLTO: Global optimizations with near-local build speed for AI frameworks.

- Model Optimizations: Llama 3.1 405B, Llama 3 70B, and GLM-4.6 tuned for MI300X/MI350 GPUs.

The release underscores AMD’s commitment to bridging the gap between research and production AI. For data centers running large-scale models, the combination of low-precision support, topology-aware communication, and power management could translate to significant cost and efficiency savings.

Availability details have not been confirmed, but developers can explore ROCm 7.2 through AMD’s official documentation and community resources.