Telecom networks are no longer just conduits for data; they are becoming the backbone of a new AI revolution. A coalition of global operators and technology partners has launched geographically distributed AI grids that run inference closer to users, cutting latency and operating costs while opening up new revenue streams.

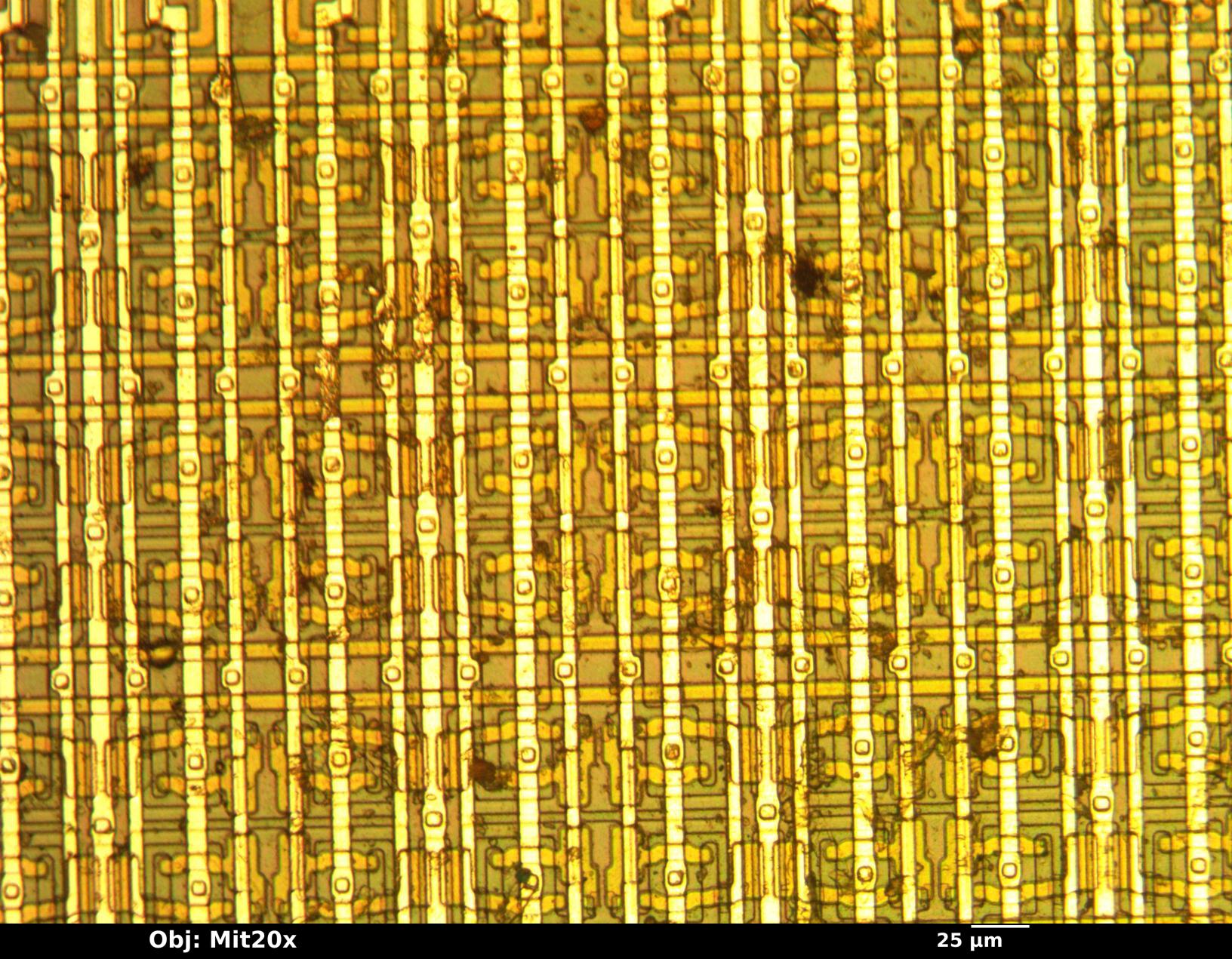

This transformation redefines the edge: 100,000 existing network sites, spanning regional hubs, mobile switching offices, and central offices, are being repurposed into a unified intelligence layer. Drawing on more than 100 gigawatts of spare power capacity, these grids are meant to let operators monetize AI at scale while addressing two challenges at once: cost per token and real-time response.

From Wires to Workloads

The approach varies by operator. Some are retrofitting wired edge sites with AI-optimized hardware, while others integrate AI directly into radio access networks (AI-RAN) so that the same grid handles both connectivity and inference workloads. The result is a platform that can support demanding applications, from public safety to cloud gaming, without relying solely on centralized data centers.

Key Players and Use Cases

- Akamai: Akamai is expanding its Inference Cloud across 4,400 edge locations with NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs and matching each request to the most suitable compute tier. This improves token economics for applications like gaming, financial services, and retail.

- Comcast: Leveraging its 1,000+ edge data centers, Comcast is deploying an AI grid for hyper-personalized media. The system maintains responsiveness during peak demand, reducing cost-per-token while supporting NVIDIA GeForce NOW cloud gaming and conversational agents.

- Indosat Ooredoo Hutchison: Connecting sovereign AI factories across Indonesia, the operator is running Sahabat-AI—a Bahasa Indonesia platform—on a localized grid. This gives developers a culturally compliant, low-latency environment for building AI applications tailored to Indonesia’s diverse geography.

- Spectrum: Using remote GPUs embedded in its fiber network, Spectrum is targeting high-resolution graphics for media production. Latency stays under 10 milliseconds, ensuring seamless workflows even with distributed teams.
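
The idea of matching each request to a compute tier, mentioned in the Akamai entry above, can be illustrated with a minimal sketch. It is not any operator's actual routing logic: the `Tier` type, the `pick_tier` function, the tier names, and every latency and cost figure are hypothetical, and the policy shown (cheapest tier that fits a latency budget) is only one plausible choice.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    """A hypothetical compute tier in a distributed AI grid."""
    name: str
    round_trip_ms: float       # assumed network round trip to this tier
    cost_per_1k_tokens: float  # assumed relative cost, arbitrary units


def pick_tier(tiers: list[Tier], latency_budget_ms: float) -> Optional[Tier]:
    """Return the cheapest tier whose round trip fits the budget, or None."""
    eligible = [t for t in tiers if t.round_trip_ms <= latency_budget_ms]
    return min(eligible, key=lambda t: t.cost_per_1k_tokens, default=None)


# Illustrative values only; none of these numbers come from the article.
TIERS = [
    Tier("edge-site", round_trip_ms=8.0, cost_per_1k_tokens=0.6),
    Tier("regional-hub", round_trip_ms=35.0, cost_per_1k_tokens=0.5),
    Tier("central-dc", round_trip_ms=120.0, cost_per_1k_tokens=1.0),
]

tight = pick_tier(TIERS, latency_budget_ms=10.0)     # only the edge site qualifies
relaxed = pick_tier(TIERS, latency_budget_ms=500.0)  # all qualify, cheapest wins
```

Under these assumed numbers, a request with a 10 ms budget lands on `edge-site`, while one with a 500 ms budget goes to `regional-hub`. A real scheduler would presumably also weigh GPU availability, model placement, and current load.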

Why It Matters

The implications are twofold: operational and strategic. Operationally, running inference at the edge cuts cost per token by up to 50% while keeping latency under 500 milliseconds for conversational agents. Strategically, telecoms are moving from infrastructure providers to participants in the AI value chain, monetizing spare capacity while reducing their reliance on cloud giants.
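
To make the cost claim concrete, here is a small back-of-the-envelope sketch. The function name, the token volume, and the baseline price are assumptions chosen for illustration; only the 50% figure comes from the text, where it is stated as an upper bound rather than a typical result.

```python
def monthly_inference_cost(
    tokens_per_month: int,
    cost_per_million_tokens: float,
    reduction: float = 0.0,
) -> float:
    """Monthly spend after applying a fractional cost-per-token reduction."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    millions_of_tokens = tokens_per_month / 1_000_000
    return millions_of_tokens * cost_per_million_tokens * (1.0 - reduction)


# Hypothetical workload: 2 billion tokens a month at 4.0 currency units
# per million tokens. Neither figure comes from the article.
baseline = monthly_inference_cost(2_000_000_000, 4.0)
best_case = monthly_inference_cost(2_000_000_000, 4.0, reduction=0.5)
```

With these assumed inputs the baseline is 8,000 units per month and the best case is 4,000. Because the 50% figure is a ceiling, actual savings would fall somewhere between the two.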

What’s Next

The NVIDIA AI Grid Reference Design provides the blueprint, but adoption hinges on ecosystem maturity. Partners such as Cisco and HPE, along with orchestration platforms Armada and Rafay, are building control planes to manage distributed workloads. Developers are already piloting use cases, from smart-city vision AI to interactive video generation, but the grids remain untested at national scale.

The shift is already under way: telecom networks are turning from pipelines for data into a foundation for distributed AI, and the race to build these grids is just beginning.