SambaNova has thrown down the gauntlet to NVIDIA and AMD with the launch of its SN50 AI accelerator, a chip designed to redefine enterprise inference at scale. The company claims the SN50 delivers five times the compute per accelerator compared to rivals while lowering total cost of ownership by a factor of three, a bold claim in a market where GPUs still reign supreme. Whether this translates into real-world adoption remains an open question.

Backed by a $350 million Series E funding round—led by Vista Equity Partners and Cambium Capital—and a multi-year collaboration with Intel, SambaNova is betting that its Reconfigurable Data Unit (RDU) architecture can outperform traditional GPU-based solutions. The partnership with Intel, which includes a strategic investment, aims to integrate SambaNova’s systems with Intel’s Xeon CPUs, GPUs, and networking tech to create a heterogeneous AI infrastructure—one that could challenge the dominance of GPU-centric data centers.

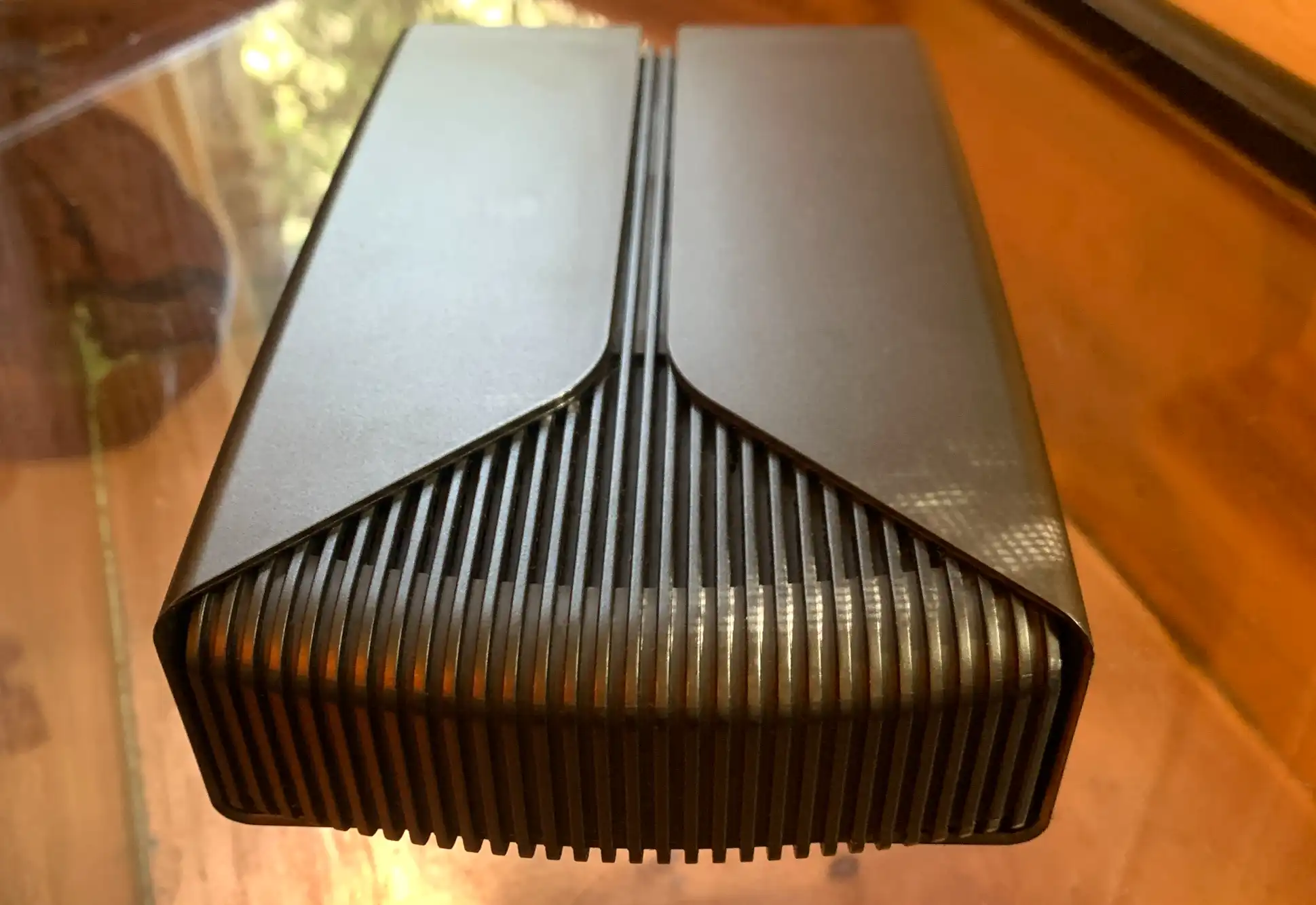

Yet, the road ahead is far from smooth. While SambaNova touts ultra-low latency and multi-terabyte-per-second interconnects linking up to 256 accelerators, the company must prove it can deliver on performance benchmarks that match—or exceed—NVIDIA’s H100 or AMD’s Instinct MI300X in real-world deployments. The SN50’s three-tier memory architecture, which supports models with over 10 trillion parameters and 10 million-token contexts, is a technical feat, but cost-sensitive enterprises may still hesitate to abandon GPUs without ironclad evidence of superior efficiency.
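To put the 10-trillion-parameter figure in perspective, the following is a rough back-of-the-envelope sketch of how much memory the model weights alone would occupy, and what an even split across 256 accelerators would look like. The precisions listed are assumptions for illustration; the announcement as reported here does not say which numeric format the SN50 uses, and the estimate ignores activations and the cache needed for long contexts.

```python
# Rough estimate of weight storage for a very large model.
# Assumptions (not from the announcement): the bytes-per-parameter values
# below, and an even split of weights across all accelerators.

PARAMS = 10e12        # 10 trillion parameters, the figure quoted in the article
ACCELERATORS = 256    # maximum number of linked accelerators quoted in the article

# Hypothetical numeric formats and their storage cost per parameter.
PRECISIONS = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}


def weight_memory_tb(num_params: float, bytes_per_param: float) -> float:
    """Return terabytes (10**12 bytes) needed to hold the weights alone."""
    return num_params * bytes_per_param / 1e12


def per_accelerator_gb(num_params: float, bytes_per_param: float,
                       accelerators: int) -> float:
    """Return gigabytes per accelerator if weights are split evenly."""
    return weight_memory_tb(num_params, bytes_per_param) * 1000 / accelerators


if __name__ == "__main__":
    for name, nbytes in PRECISIONS.items():
        total = weight_memory_tb(PARAMS, nbytes)
        share = per_accelerator_gb(PARAMS, nbytes, ACCELERATORS)
        print(f"{name:7s} total = {total:5.1f} TB, "
              f"per accelerator = {share:6.1f} GB")
```

Under these assumptions, 16-bit weights come to about 20 TB in total, or roughly 78 GB per accelerator across 256 devices, which gives a sense of why a tiered memory design matters at this scale.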

The Intel Gambit

Intel’s involvement is a strategic pivot for both companies. For SambaNova, Intel’s global distribution network and enterprise credibility could accelerate adoption in sectors where GPU alternatives are desperately sought. For Intel, the partnership is part of a broader push to regain ground in AI acceleration—a domain where it has long trailed NVIDIA.

Kevork Kechichian, Intel’s EVP and GM of the Data Center Group, framed the collaboration as a response to customer demand for more choice in AI infrastructure. The joint effort will focus on three key areas: scaling SambaNova’s AI cloud, integrating Intel’s hardware into SambaNova’s systems, and co-marketing solutions through Intel’s enterprise channels. But whether this will translate into meaningful market share gains remains uncertain.

The collaboration also includes Intel’s investment in SambaNova’s AI cloud expansion, which will run on Intel Xeon-based infrastructure. This could position SambaNova as a viable alternative for enterprises looking to avoid vendor lock-in with NVIDIA’s CUDA ecosystem. However, Intel’s own AI accelerator roadmap, including Gaudi 3 and its planned successors, could create internal competition for SambaNova’s business.

SoftBank’s Early Bet

SoftBank Corp. has emerged as SambaNova’s first major customer, deploying the SN50 in its next-generation AI data centers in Japan. The move underscores SambaNova’s ambitions in sovereign AI deployments, where latency, cost, and data residency are critical factors. SoftBank’s decision to standardize on SN50 suggests confidence in SambaNova’s ability to deliver low-latency inference for both open-source and proprietary models—a key differentiator in a market dominated by GPU-based solutions.

Yet, SoftBank’s adoption is still an outlier. Most enterprises remain deeply invested in GPU-based AI infrastructure, and convincing them to switch requires more than technical specs—it demands proven performance in real-world workloads. SambaNova’s claims of three times lower total cost of ownership and air-cooled efficiency are compelling, but without third-party benchmarks, skepticism persists.
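The phrase "three times lower" is easy to misread, so it is worth spelling out the arithmetic. The sketch below contrasts a cost divided by three with a cost reduced by one-third. The baseline of 90 cost units is purely hypothetical and chosen only to keep the numbers round; it is not a figure from SambaNova or any vendor.

```python
# Two readings of a cost-reduction claim, using a hypothetical baseline.

BASELINE_TCO = 90.0  # hypothetical cost units for a GPU deployment


def tco_divided_by(baseline: float, factor: float) -> float:
    """'N times lower': the new cost is the baseline divided by N."""
    return baseline / factor


def tco_cut_by_fraction(baseline: float, fraction: float) -> float:
    """'Cut by a fraction': the new cost is baseline * (1 - fraction)."""
    return baseline * (1.0 - fraction)


if __name__ == "__main__":
    three_times_lower = tco_divided_by(BASELINE_TCO, 3.0)
    one_third_cut = tco_cut_by_fraction(BASELINE_TCO, 1.0 / 3.0)
    print(f"three times lower: {three_times_lower:.1f} units")
    print(f"cut by one-third:  {one_third_cut:.1f} units")
```

With this baseline, "three times lower" leaves 30 units while a one-third cut leaves 60, so the two readings differ by a factor of two. Third-party benchmarks would need to state which one they are verifying.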

What’s at Stake?

The SN50’s success hinges on several unanswered questions:

- Can it match GPU performance? While SambaNova highlights five times the compute per accelerator, independent benchmarks are lacking. Enterprises won’t abandon GPUs without proof of superior throughput, especially in mixed workloads.

- Will Intel’s ecosystem adoption be enough? Intel’s partnership is a critical validation, but SambaNova must ensure its systems integrate seamlessly with Intel’s hardware—something that hasn’t been thoroughly tested in production environments.

- Is the funding enough for scale? The $350 million should help fund production, but TSMC and Samsung are foundries that build chips for many vendors rather than rivals to SambaNova. Securing enough foundry capacity to ship at a volume comparable to GPU vendors will require significant investment in R&D and supply chain partnerships.

- Can it crack sovereign markets? SoftBank’s deployment in Japan is a strong signal, but SambaNova must replicate this success in Europe, the Middle East, and elsewhere in Asia, where data sovereignty and latency concerns are driving demand for non-GPU alternatives.

Landon Downs of Cambium Capital, one of the lead investors, described the SN50 as a turning point for agentic AI, where real-time responsiveness and cost efficiency are non-negotiable. But the proof will come when enterprises like financial institutions, telecoms, and energy firms adopt SN50 at scale—something that won’t happen overnight.

A GPU Challenger Emerges?

SambaNova’s bet is that AI inference is evolving beyond raw model size and toward infrastructure efficiency. The SN50’s multi-model memory and agentic caching are designed to optimize costs for enterprises running thousands of simultaneous AI sessions—a use case where GPUs often struggle with latency and power consumption.

Yet NVIDIA’s dominance in AI inference remains unshaken. The company’s NVLink interconnects, TensorRT optimizations, and CUDA ecosystem give it a formidable lead in many enterprise deployments. SambaNova’s challenge is to demonstrate that its RDU architecture can deliver comparable or better performance without locking customers into a proprietary stack of its own.

With shipments expected later this year, the SN50’s real test begins. If SambaNova can deliver on its promises, it could carve out a significant niche in AI inference. If not, it risks becoming another high-profile but ultimately unsuccessful challenge to NVIDIA’s dominance in AI hardware.

The stakes are high—not just for SambaNova and Intel, but for the entire AI infrastructure landscape. In a market where agentic AI is the next frontier, the race isn’t just about building bigger models. It’s about who can light up data centers with instant, cost-effective, and scalable intelligence—and SambaNova is betting it has the chip to win that race.