For users running AI agents like OpenClaw, NVIDIA’s latest hardware upgrades make local AI processing a credible rival to cloud-based performance. The company has optimized its GeForce RTX GPUs and DGX Spark systems to handle OpenClaw’s demanding workloads through a combination of expanded memory capacity and AI-focused hardware acceleration.

The move positions NVIDIA’s hardware as a viable alternative to cloud-based AI agents, offering responsive performance without the network round trip that a remote service adds. OpenClaw, known for its 'local-first' architecture, now runs natively on NVIDIA’s platforms, unlocking features like personalized project management, autonomous email handling, and research assistance. These are powered by models that scale from 4B parameters on entry-level GPUs to 120B parameters on DGX Spark’s 128GB memory pool.

Why This Matters: Memory and Speed Redefined

OpenClaw’s performance hinges on two critical factors: memory capacity and compute efficiency. NVIDIA’s latest optimizations address both, and a simplified software stack makes them easier to reach:

- Memory: DGX Spark’s 128GB of unified LPDDR5x memory enables deployment of massive models like gpt-oss-120B locally, while RTX GPUs (starting at 8GB) support smaller but still capable 4B-parameter models.

- Compute: NVFP4, a 4-bit floating-point data format supported in hardware on recent RTX GPUs, delivers 3x faster creative AI performance and 35% faster LLM inference compared to prior generations, according to NVIDIA. DGX Spark, meanwhile, has seen a 2.5x performance boost since launch.

- Software Stack: NVIDIA’s guide simplifies setup via Windows Subsystem for Linux (WSL) and tools like LM Studio or Ollama for local LLM configuration.
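To see why the memory figures above pair with those model sizes, a rough back-of-the-envelope estimate helps. The sketch below is an illustration, not NVIDIA's sizing method: it treats parameter counts as nominal, counts only weight storage, and adds a hypothetical 20% allowance (`overhead_fraction`) for KV cache and runtime buffers. Real requirements depend on the runtime, the quantization scheme, and context length.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


def fits(params_billions: float, bits_per_weight: float,
         memory_gb: float, overhead_fraction: float = 0.2) -> bool:
    """True if weights plus an assumed overhead fit in memory_gb.

    overhead_fraction is a hypothetical allowance for KV cache and
    runtime buffers; it is an assumption, not a measured value.
    """
    needed = weight_memory_gb(params_billions, bits_per_weight) * (1 + overhead_fraction)
    return needed <= memory_gb


if __name__ == "__main__":
    cases = [("4B model, 8GB GPU", 4, 8),
             ("120B model, 128GB DGX Spark", 120, 128)]
    for name, params, mem in cases:
        for bits in (16, 4):
            gb = weight_memory_gb(params, bits)
            print(f"{name}, {bits}-bit: ~{gb:.0f} GB of weights, "
                  f"fits={fits(params, bits, mem)}")
```

Under these assumptions, a 120B-parameter model needs roughly 240 GB of weights at 16 bits but about 60 GB at 4 bits, which is why 4-bit formats and a 128GB memory pool go together.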

This isn’t just about raw numbers; it’s about turning a desktop machine into an AI workstation. For example, a user with a DGX Spark can run OpenClaw as a 'personal secretary' that drafts emails, manages calendars, and generates reports by combining web searches with private documents, with inference running on the local machine rather than on external servers.
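As an illustration of what "local" means in practice, the sketch below sends a prompt to an Ollama server on the same machine through its HTTP API on the default port 11434. This is not OpenClaw's own integration, which the article does not describe; the model tag `gpt-oss:120b` and the prompt are placeholders, and the model is assumed to be already pulled.

```python
import json
import urllib.request

# Ollama's default local endpoint; the request never leaves localhost.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str,
                  url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]


if __name__ == "__main__":
    # Requires a running Ollama server; the model tag is an assumption.
    print(generate("gpt-oss:120b", "Draft a two-line status update for my team."))
```

Because the endpoint is `localhost`, the prompt and any private documents included in it stay on the machine doing the inference.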

Who Benefits?

The upgrade primarily targets three groups:

- Power Users and Creators: Those running local AI agents for tasks like video editing, code generation, or research will see faster iteration times and lower costs than cloud-based alternatives.

- Enterprise and Developers: Teams working with proprietary data can now deploy OpenClaw on-premises, avoiding compliance risks associated with cloud uploads.

- Gamers and Enthusiasts: RTX AI GPUs (like the RTX 50 series) unlock creative AI tools for game modding, asset generation, and real-time rendering—all while maintaining gaming performance.

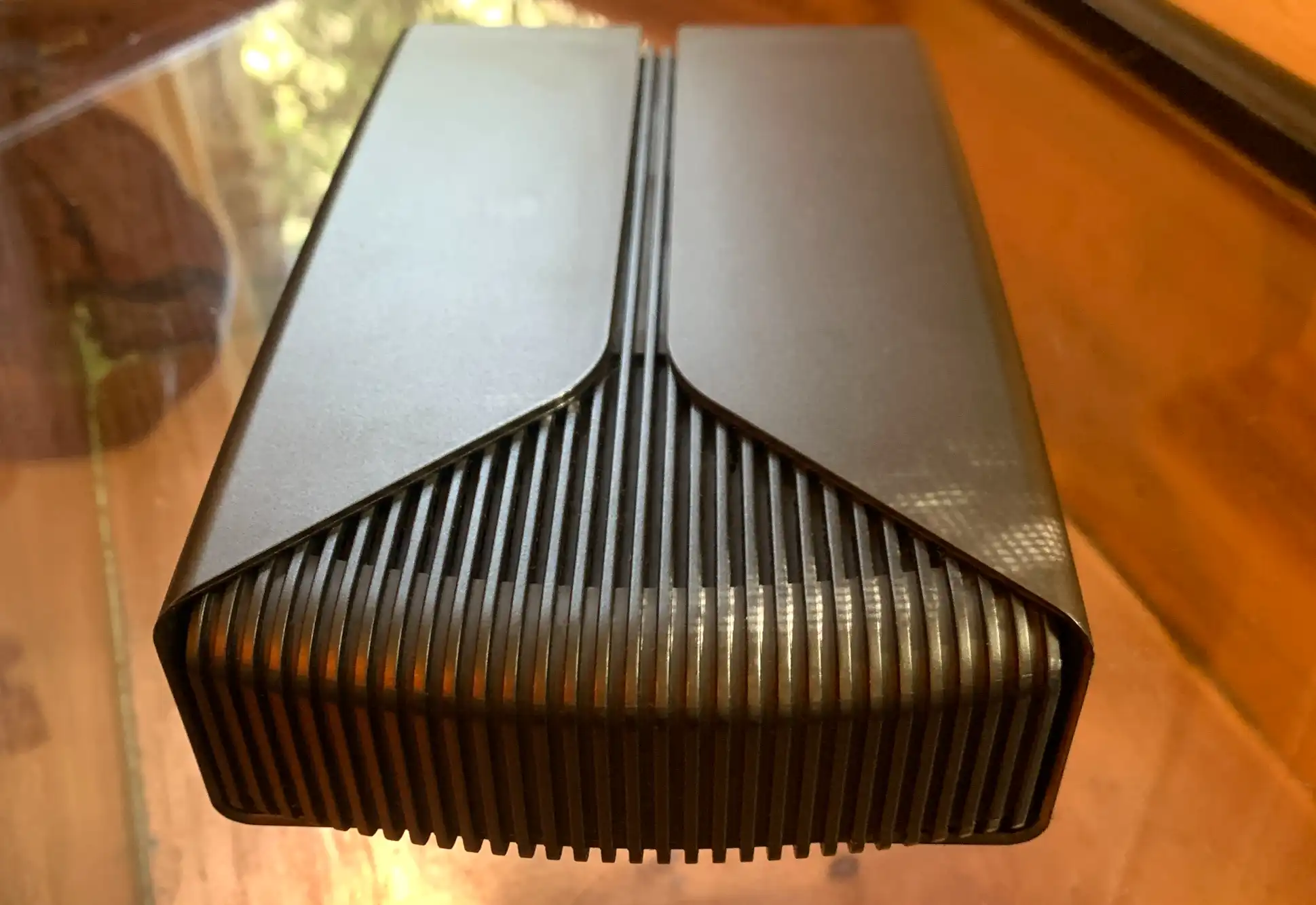

The tradeoff? Higher-end models like DGX Spark require significant power and cooling, while entry-level GPUs may struggle with the largest models. However, NVIDIA’s tiered approach ensures flexibility: smaller GPUs (12GB+) handle lightweight tasks, while DGX Spark’s 128GB memory opens doors to enterprise-grade AI.

What’s Next?

NVIDIA has published a detailed guide for setting up OpenClaw on compatible hardware, covering everything from WSL configuration to model pairing based on GPU memory. The focus on local AI aligns with broader industry trends toward privacy-preserving, low-latency AI—particularly relevant for CES 2026, where NVIDIA is expected to highlight advancements in AI acceleration.
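The idea of pairing a model with available GPU memory can be sketched in a few lines. The example below is a hypothetical illustration, not the logic of NVIDIA's guide: it reads total memory with `nvidia-smi` and maps it onto the only two pairings this article mentions (4B-class models from 8GB, 120B-class models at 128GB). The `TIERS` table and helper names are assumptions made for the example.

```python
import subprocess
from typing import Optional

# Illustrative tiers built from the two pairings named in this article.
TIERS = [(8, "4B-parameter class"), (128, "120B-parameter class")]


def parse_memory_mib(smi_output: str) -> Optional[int]:
    """Return the first GPU's total memory in MiB, or None if not numeric."""
    for line in smi_output.splitlines():
        line = line.strip()
        if line.isdigit():
            return int(line)
    return None


def pick_tier(memory_gb: float) -> Optional[str]:
    """Pick the largest tier whose minimum memory the machine meets."""
    chosen = None
    for min_gb, label in TIERS:
        if memory_gb >= min_gb:
            chosen = label
    return chosen


def query_gpu_memory_mib() -> Optional[int]:
    """Ask nvidia-smi for total GPU memory; None if unavailable."""
    try:
        out = subprocess.run(
            ["nvidia-smi", "--query-gpu=memory.total",
             "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True,
        ).stdout
    except (OSError, subprocess.CalledProcessError):
        return None
    return parse_memory_mib(out)


if __name__ == "__main__":
    mib = query_gpu_memory_mib()
    if mib is None:
        print("Could not read GPU memory; enter the size manually.")
    else:
        # Round to whole GB, since cards often report slightly under nominal.
        print(pick_tier(round(mib / 1024)))
```

If the tool is missing or reports a non-numeric value, the query returns `None`, so a real setup script would need a manual fallback for the memory size.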

For now, the setup is available to existing RTX GPU owners and DGX Spark users, with no additional hardware purchases required. The key takeaway: NVIDIA’s hardware isn’t just for gamers anymore; it’s a platform for building AI-driven workflows at home.