For companies deploying AI at scale, the cost of processing tokens, the units of text a model reads and generates, has become a critical constraint. A diagnostic analysis in healthcare, a line of dialogue in an AI-driven game, and a customer service response all depend on how cheaply and quickly those tokens can be processed.

Now a new wave of optimization is emerging. By running open-source models on NVIDIA’s Blackwell architecture, some of the largest inference providers are reducing per-token costs by up to 10x, according to recent benchmarks. That could make it feasible for smaller enterprises to deploy sophisticated models without exorbitant infrastructure expenses.

The implications span industries. In healthcare, where AI-assisted diagnostics rely on parsing vast datasets, reduced token costs could accelerate adoption. Game developers might integrate more dynamic, AI-driven narratives without compromising performance. Even customer service platforms, which increasingly use AI to handle inquiries, could see lower operational costs per interaction.

The Blackwell Advantage

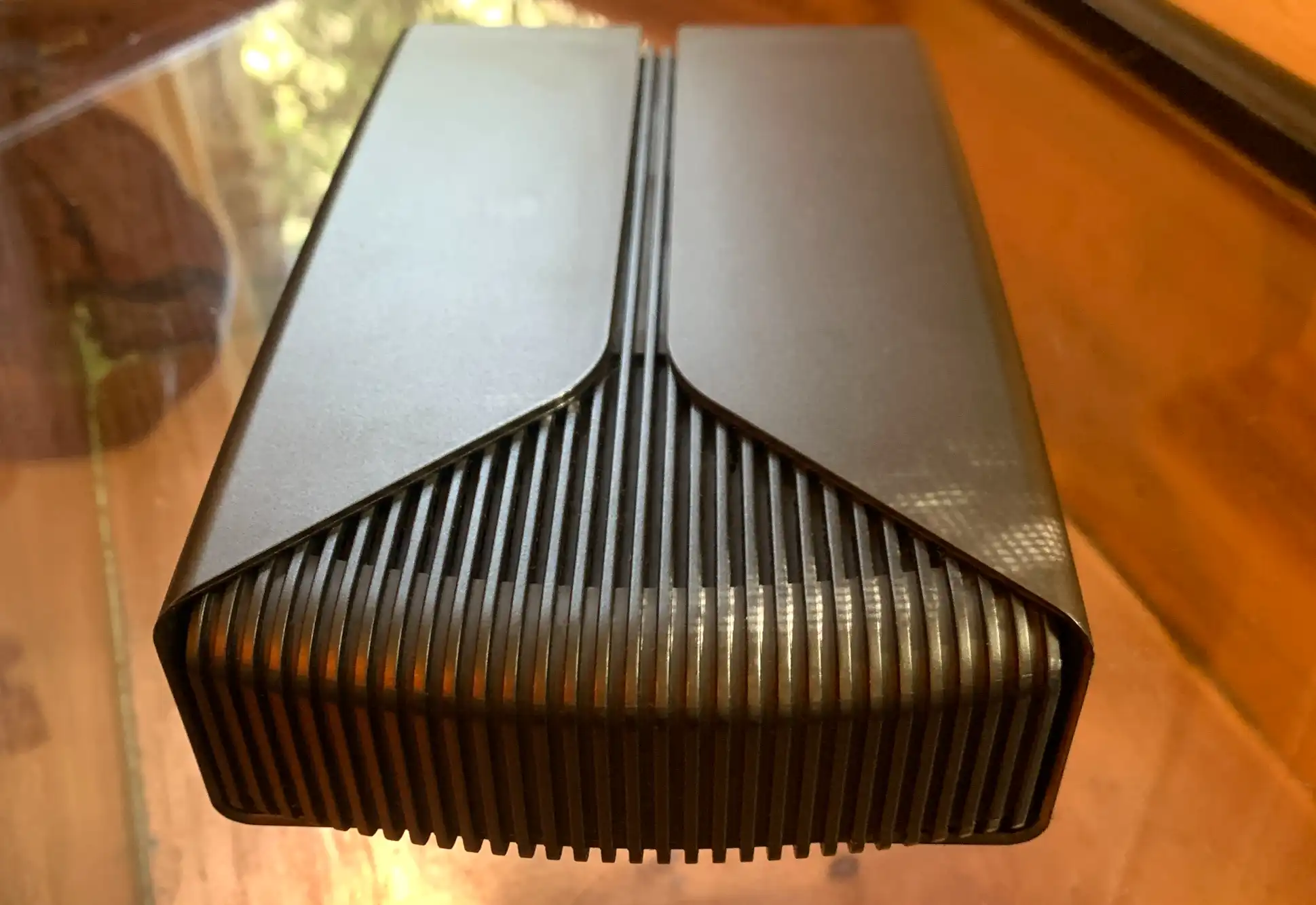

NVIDIA’s Blackwell platform, designed for next-generation AI workloads, delivers significant improvements in efficiency. Its Tensor Cores support low-precision number formats such as FP8 and FP4, which allow faster processing at lower power consumption than higher-precision arithmetic. When paired with open-source models, particularly those optimized for inference-heavy tasks, the result is a marked drop in the compute cost of each token.

As an illustration, running a large language model for customer service queries might cost $0.00001 per 1,000 tokens on Blackwell, compared with $0.0001 or more on previous-generation hardware, a tenfold difference. Exact figures vary by model and use case, but the trend is clear: Blackwell’s combination of hardware acceleration and software optimizations is redefining the economics of AI inference.
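To see what a tenfold price gap means at volume, the short Python sketch below applies the illustrative per-1,000-token prices quoted above to a monthly workload. The workload size (one million responses of 500 tokens each) and the helper name `cost_usd` are assumptions made for this example, not figures from any provider.

```python
def cost_usd(tokens: int, price_per_1k_tokens: float) -> float:
    """Cost in USD of processing `tokens` tokens at a given price per 1,000 tokens."""
    return tokens / 1_000 * price_per_1k_tokens


# Illustrative prices from the text (USD per 1,000 tokens), not measured figures.
BLACKWELL_PRICE_PER_1K = 0.00001
PREVIOUS_GEN_PRICE_PER_1K = 0.0001

# Assumed workload: 1 million responses per month at 500 tokens each (hypothetical).
MONTHLY_TOKENS = 1_000_000 * 500

previous = cost_usd(MONTHLY_TOKENS, PREVIOUS_GEN_PRICE_PER_1K)
blackwell = cost_usd(MONTHLY_TOKENS, BLACKWELL_PRICE_PER_1K)
ratio = previous / blackwell

print(f"previous generation: ${previous:,.2f} per month")
print(f"Blackwell:           ${blackwell:,.2f} per month")
print(f"ratio:               {ratio:.1f}x")
```

Under these assumptions the monthly bill falls from roughly $50 to roughly $5. The absolute amounts are small at this volume, but the same ratio applies to workloads that are thousands of times larger.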

Who Stands to Gain?

The cost reductions aren’t limited to tech giants. Startups and mid-sized companies that previously avoided AI due to prohibitive costs may now find entry points. Healthcare providers could deploy AI diagnostics more widely, while gaming studios might afford richer, more responsive AI characters. Even industries like finance, where AI-driven fraud detection relies on real-time token processing, could see operational savings.

Yet challenges remain. Open-source models, while cost-effective, may require additional tuning for enterprise-grade reliability. Latency-sensitive applications—such as real-time customer interactions—will need careful benchmarking to ensure Blackwell’s performance meets expectations. Still, the potential for 10x cost savings is a compelling incentive for businesses to reevaluate their AI strategies.
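The benchmarking mentioned above can start very simply. The Python sketch below times repeated calls and reports median and tail latency. `fake_request` is a hypothetical stand-in that only sleeps for a millisecond; a real test would replace it with a call to the inference endpoint being evaluated.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies(request: Callable[[], object], runs: int = 50) -> List[float]:
    """Call `request` repeatedly and return each call's wall-clock latency in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        request()
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Return the median, 95th-percentile, and worst-case latency."""
    # quantiles(..., n=100) returns 99 cut points; index 94 is the 95th percentile.
    cut_points = statistics.quantiles(latencies, n=100, method="inclusive")
    return {
        "p50": statistics.median(latencies),
        "p95": cut_points[94],
        "max": max(latencies),
    }


def fake_request() -> None:
    # Hypothetical placeholder for a real inference call.
    time.sleep(0.001)


summary = summarize(measure_latencies(fake_request, runs=20))
for name, seconds in summary.items():
    print(f"{name}: {seconds * 1000:.2f} ms")
```

Tail latency (p95 and the maximum) usually matters more than the average for real-time customer interactions, which is why the summary reports it separately.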

The shift toward Blackwell-powered inference also underscores a broader trend: the growing synergy between open-source innovation and proprietary hardware. As more providers adopt this model, the barrier to AI adoption could continue to fall, unlocking new possibilities across sectors.