Behind Google's latest data center innovation lies a carefully orchestrated blend of cutting-edge hardware designed to push agentic AI systems to new levels of performance. The AI Hypercomputer doesn't merely stack existing technologies; it integrates them to address the growing complexity of modern AI workloads.

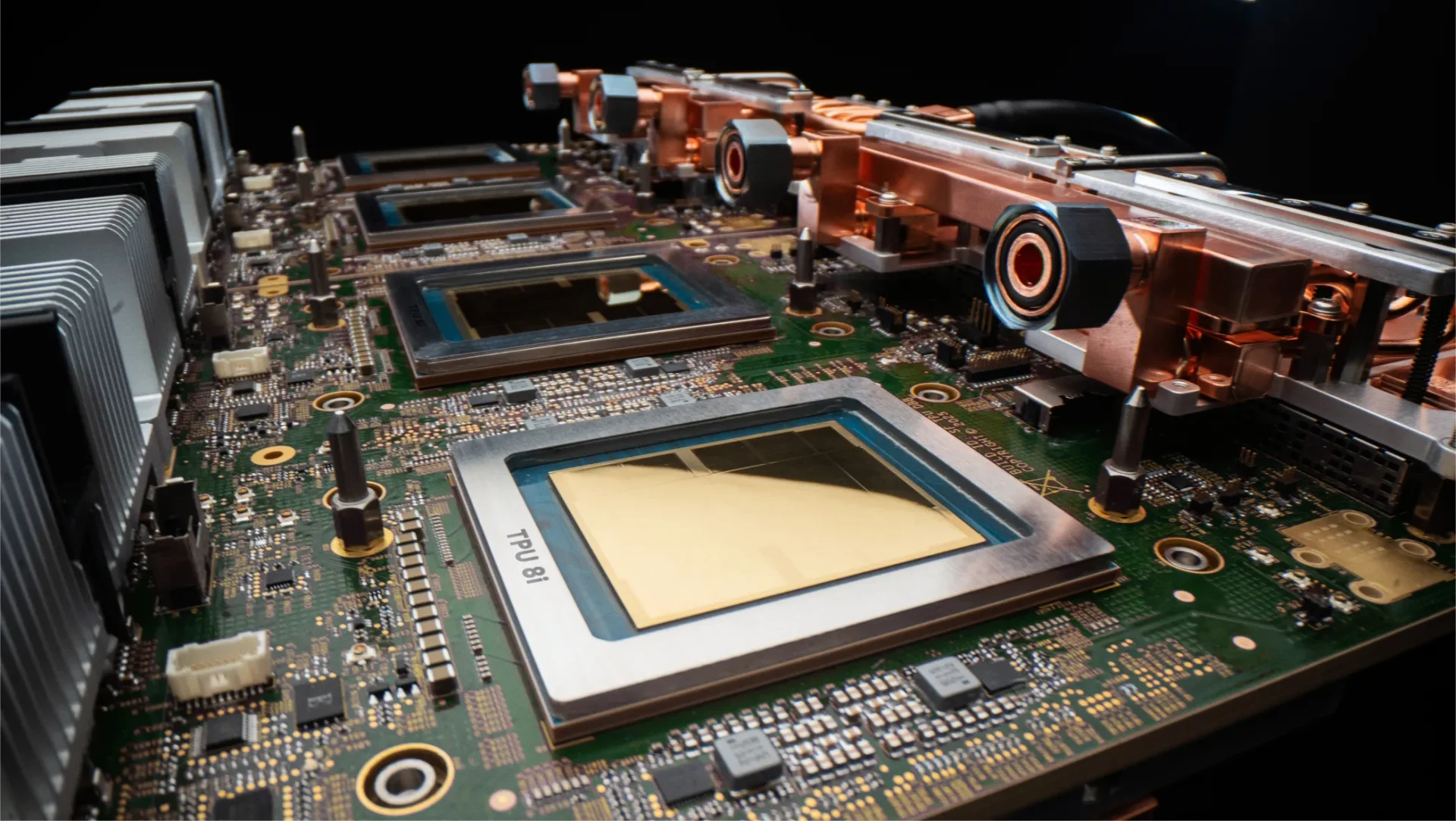

At its core, the system combines 8th-generation Tensor Processing Units (TPUs) with NVIDIA Rubin GPUs and Axion CPUs. TPUs continue to dominate tasks built on massively parallel matrix operations, such as those at the heart of deep learning. The Rubin GPUs bring broader computational flexibility and handle general-purpose workloads that TPUs are less suited to. The Axion CPUs, still in their developmental phase, promise to serve as a unifying layer. By consolidating different processing requirements into one cohesive architecture, they could reduce the need for separate systems.

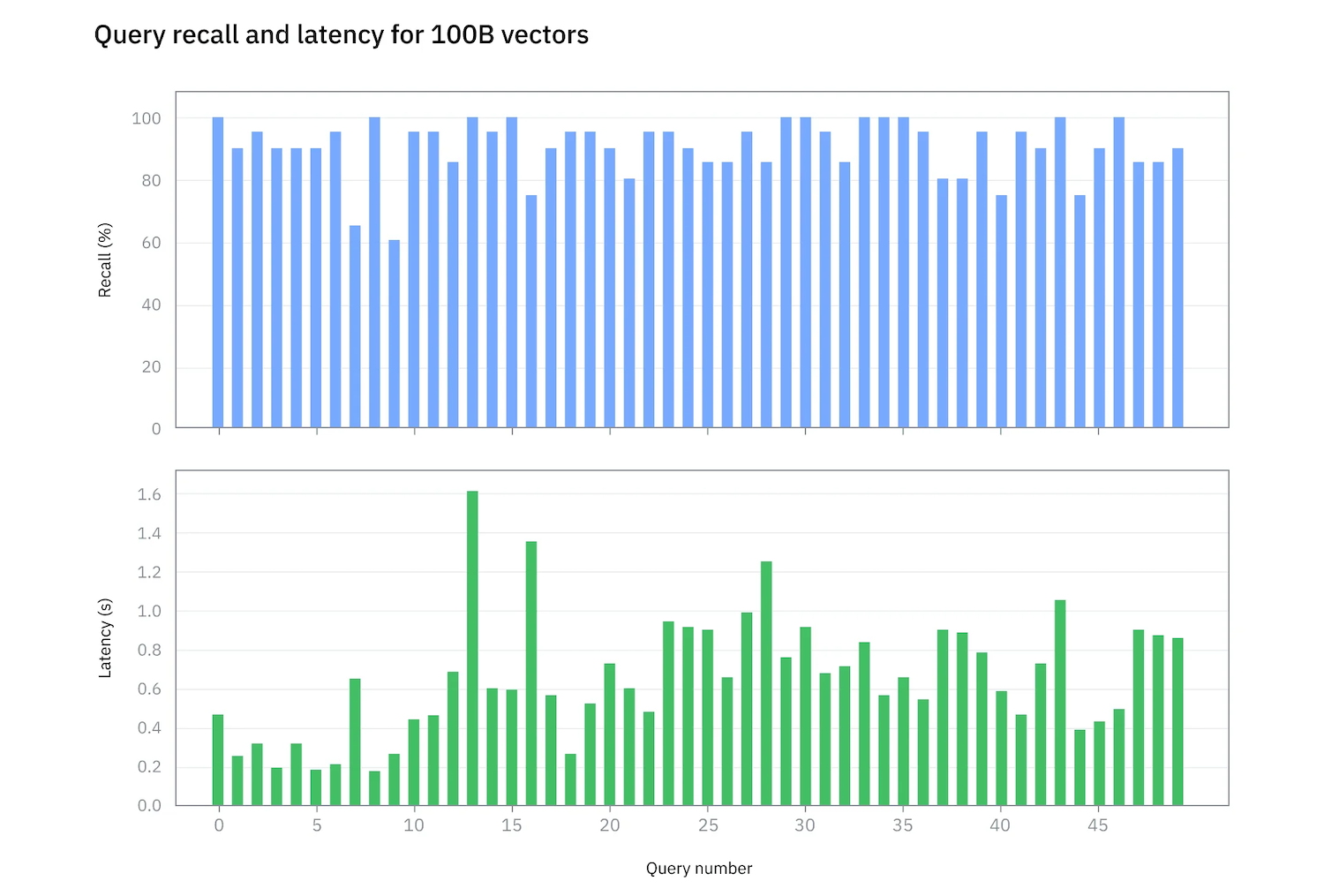

This hybrid approach stands out in an industry where specialization often leads to fragmentation. Previous generations of AI hardware tended toward one extreme, favoring either raw throughput or specialized efficiency. The AI Hypercomputer aims to strike a balance between the two. For organizations investing in large-scale AI deployments, this could mean lower latency for certain tasks while retaining the performance gains associated with TPUs, all without sacrificing flexibility.

The implications for power users and enterprises are significant. The ability to dynamically allocate workloads across TPUs, GPUs, and future Axion CPUs suggests a more adaptive infrastructure, one that can scale efficiently as AI models grow in size and complexity. Competitors may need to reconsider their own roadmaps, particularly if Google's integration proves to be both stable and performant.
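To make the idea of dynamic allocation more concrete, here is a toy sketch of a routing policy that sends each workload to the processor type best suited to it. It is purely hypothetical. The `Workload` fields, the `route` function, and its thresholds are illustrative assumptions. They do not represent Google's actual scheduler or any Google Cloud API.

```python
from dataclasses import dataclass
from enum import Enum


class Device(Enum):
    TPU = "tpu"
    GPU = "gpu"
    CPU = "cpu"


@dataclass
class Workload:
    name: str
    # Hypothetical metric: share of compute spent in dense matrix ops (0.0 to 1.0).
    matmul_fraction: float
    # Hypothetical flag: needs irregular or custom kernels that suit a GPU better.
    needs_custom_kernels: bool


def route(w: Workload, matmul_threshold: float = 0.7) -> Device:
    """Toy placement policy; the thresholds are illustrative, not tuned values."""
    # Matrix-heavy, regular workloads (e.g. transformer training) go to TPUs.
    if w.matmul_fraction >= matmul_threshold and not w.needs_custom_kernels:
        return Device.TPU
    # Mixed or irregular compute goes to the more flexible GPUs.
    if w.matmul_fraction > 0.2 or w.needs_custom_kernels:
        return Device.GPU
    # Light, control-heavy work (e.g. agent orchestration) stays on CPUs.
    return Device.CPU


if __name__ == "__main__":
    jobs = [
        Workload("transformer-training", 0.9, False),
        Workload("sparse-retrieval", 0.4, True),
        Workload("agent-orchestration", 0.05, False),
    ]
    for job in jobs:
        print(job.name, route(job).value)
```

Run as a script, this prints `tpu`, `gpu`, and `cpu` for the three example jobs. A production scheduler would also weigh queue depth, memory footprint, and data locality. The fixed thresholds here only illustrate the principle of matching each workload to the hardware that handles it best.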

Yet challenges remain. The Axion CPUs, while promising, are still unproven at scale, which leaves open questions about how they will integrate with the existing TPU-GPU pairing. If the integration succeeds, however, this architecture could set a new standard for AI compute, one that prioritizes modularity and adaptability over rigid specialization.

As the race to build more capable agentic systems accelerates, Google's move underscores the need for hardware that can evolve alongside software demands. It remains to be seen whether this hypercomputer becomes the blueprint for future generations or merely a stepping stone, but it clearly has the potential to reshape AI infrastructure.